Why Elon Musk believes guardrails or kill switches won’t save humanity from AI risks

Musk has also drawn inspiration from Galileo Galilei’s unconventional thinking and truth-seeking ability, calling the “Galileo test” AI’s next big trial in proving true intelligence

In the age of artificial intelligence, the world is talking more about its regulation and safety than its rapid advancement. Progress without guardrails often brings unintended consequences.

Given the breakneck speed at which AI models are evolving, the international community is calling for robust regulation, guardrails, or even a moratorium on data centers.

Tech mogul Elon Musk, however, does not think that “guardrails or kill switches” can save humans from the potentially growing risks of AI.

According to the SpaceX CEO, true AI safety lies in the ability of models to seek truth.

“The best thing I can come up with for AI safety is to make it a maximum truth-seeking AI, maximally curious,” Musk said in a video widely circulating on X.

In his view, such an AI would be an intelligence whose entire optimization function is based upon understanding the universe as it actually is, and a maximally curious model would work to unravel the universe's mysteries.

According to Musk, humans are more interesting data points in the universe than space, gas, dust and asteroids, so a truth-seeking AI would preserve and extend human civilization instead of erasing humanity.

The 54-year-old billionaire promoted the idea of survival through significance rather than through controls, restrictions, and kill switches.

“So my intuition suggests that maximally curious AI is the safest AI and maximally truth-seeking AI is the safest AI,” Musk said.

He added, “One has to be careful with alignment stuff. You definitely don’t want to teach an AI to lie. That is a path to a dystopian future.”

The idea of truth-seeking AI is not new for Musk, who has declared that AI models should pass the “Galileo test” to prove true intelligence.

Earlier this month, Elon Musk posted on X, “AI must pass the Galileo test.”

The test draws its name from the famous astronomer Galileo Galilei, whose inventions and truth-seeking ability changed humanity’s understanding of the cosmos.

Musk has drawn inspiration from Galileo’s unconventional thinking and willingness to pursue the truth.

According to Musk, AI models should be trained on truthful data so that they tell the truth, no matter how unpopular it is.

“If that's true then I think it will probably foster humanity,” Musk said.

-

Brad Pitt makes PDA-filled appearance with Ines de Ramon in LA

-

Viral ‘Punch’ monkey stunt: US tourists jump into zoo enclosure, sparking crackdown

-

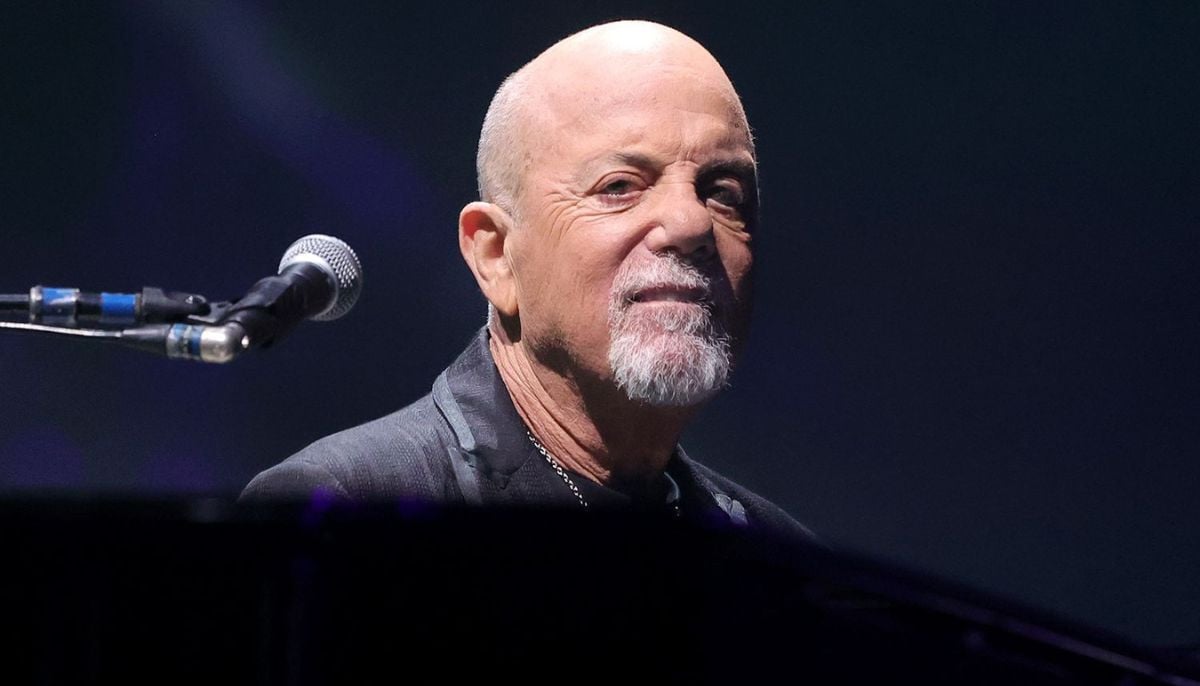

Billy Joel condemns his unauthorized biopic 'Billy and Me'

-

Donna Kelce shares 'brutal' realities about son Travis month before his wedding to Taylor Swift

-

Stephen Colbert teases chilling plans after 'The Late Show' ends

-

Jimmy Kimmel takes strong aim at White House nominating Donald Trump as next James Bond

-

Fortnite finally returns to App Stores worldwide in major comeback for Epic Games

-

Former NFL cheerleader makes major donation for Chud The Builder's victim

-

Ramsey Elkholy's Epstein connection continues to haunt former modelling agent

-

Disney+ and Anne Hathaway deepen bond with big new move

-

Nancy Guthrie kidnapping: Sheriff reveals key to solving disappearance case of Savannah's mom

-

Earth’s oceans ruled by aliens? Former US Admiral’s explosive claims sparks UFO debate