AI vs EU: ChatGPT upends Brussels' intentions for regulation

EU must now go back to drawing board to figure out how to effectively regulate AI

How do you protect people using new technology when it can radically change from one day to the next?

That's the riddle the EU faces as it races to regulate artificial intelligence.

AI is in wide use, but the conversational robot ChatGPT has transformed how people view the technology — and how regulators should monitor it to protect against risks.

Created by US startup OpenAI, ChatGPT appeared in November 2022 and was quickly seized upon by users amazed at its ability to answer difficult questions clearly, write sonnets or code, and provide information on loaded issues.

ChatGPT has even passed medical and legal exams set for human students, scoring high marks.

But the technology also carries many risks as its learning system and similar models from competitors are integrated into commercial applications.

The European Union was already deep into the process of creating an online regulatory framework, and must now go back to the drawing board to figure out how to effectively regulate AI.

The European Commission, the EU's executive arm, first announced a plan in April 2021 for an AI rulebook, and the European Parliament hopes to finalise its preferred AI Act text this month.

The EU industry commissioner, Thierry Breton, said MEPs, the commission and member states are working together to "further clarify the rules" on ChatGPT-type tech, known as general-purpose AI: systems that have a vast range of functions.

Opportunities v risks

Social media users have had fun experimenting with ChatGPT output, but it's not a game. Teachers fear students will use it to cheat, and policymakers fear it will be used to spread misinformation.

"As showcased by ChatGPT, AI solutions can offer great opportunities for businesses and citizens, but can also pose risks," Breton has said.

"This is why we need a solid regulatory framework to ensure trustworthy AI based on high-quality data."

The plan is for the European Commission, the European Council, which represents the 27 member states, and the parliament to discuss a final version of the AI Act from April.

Dragos Tudorache, the MEP overseeing the push to get the AI Act through parliament, said ChatGPT was one publicly known, news-making example of general-purpose AI and its various derivatives.

Using what is known as a "large language model", ChatGPT is an example of generative AI that — operating unguided — can create reams of original content, including images and text, by mining past data.

"We will indeed propose a set of rules to govern general-purpose AI, and foundational models in particular," said Tudorache, a Romanian MEP.

Last week, Tudorache and Italian MEP Brando Benifei presented fellow legislators with a plan to impose more obligations on general-purpose AI, a text which did not feature in the commission's original proposal.

Some experts complain that the risks from systems like ChatGPT were always clear and that the warnings were shared with EU officials as work started on the AI Act.

"Our recommendation back then was that we should also regulate AI systems that have a range of uses," said Kris Shrishak, technology fellow at the Irish Council for Civil Liberties.

But he said it was just as important to identify the risks from generative AI systems once they are deployed.

'Good foundation'

Shrishak said the act's effectiveness would depend on the final draft but that it "lays a good foundation. It does have certain mechanisms to identify new risks".

A more pressing issue would be enforcement, he warned, adding that the parliament was working towards strengthening this aspect.

"The regulation is just a piece of paper if it's not enforced," Shrishak told AFP.

OpenAI CEO Sam Altman has suggested "major world governments" and "trusted international institutions" come together and produce a set of rules that explicitly say what the system should and should not do.

Asked by an AFP reporter to say how it would regulate itself, the ChatGPT engine said it would welcome "responsible and ethical regulation".

And it called for a law that "recognises the potential benefits of AI while also addressing the potential risks and challenges."

-

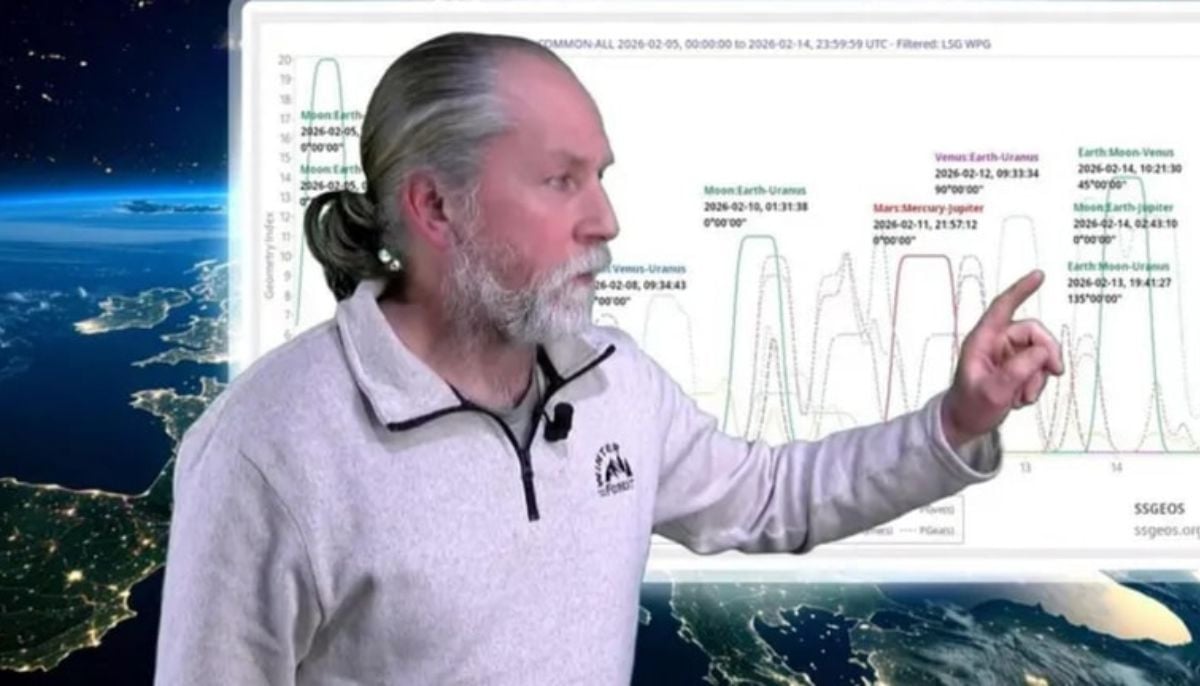

Dutch seismologist hints at 'surprise’ quake in coming days

-

SpaceX cleared for NASA Crew-12 launch after Falcon 9 review

-

Is dark matter real? New theory proposes it could be gravity behaving strangely

-

Shanghai Fusion ‘Artificial Sun’ achieves groundbreaking results with plasma control record

-

Polar vortex ‘exceptional’ disruption: Rare shift signals extreme February winter

-

Netherlands repatriates 3500-year-old Egyptian sculpture looted during Arab Spring

-

Archaeologists recreate 3,500-year-old Egyptian perfumes for modern museums

-

Smartphones in orbit? NASA’s Crew-12 and Artemis II missions to use latest mobile tech