AI breakthrough slashes quantum computing errors, study finds

The model reportedly achieves microsecond-scale processing speeds

Researchers at Harvard University have developed a neural-network-based decoder that could fundamentally change the timeline for viable quantum computing. Using artificial intelligence, the team has identified a “waterfall” effect in which error rates fall sharply, a result suggesting that the massive qubit counts previously thought necessary for quantum “supremacy” may have been overestimated.

Cracking the quantum bottleneck

Quantum computers rely on qubits, which are incredibly powerful but notoriously fragile. They are highly sensitive to noise (interference from the environment) that causes calculation errors. To counter this, quantum systems use “error correction” to detect and fix mistakes in real time. The new AI system, a convolutional neural network called Cascade, targets this step directly. According to the study, published on the preprint server arXiv, Cascade processed data up to 100,000 times faster than standard techniques and reduced error rates by factors of several thousand in benchmark tests.
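For readers who want a feel for why error correction helps at all, here is a minimal sketch using a classical repetition code with majority-vote decoding. This is a textbook toy model and an assumption made for illustration only; it is not Cascade and not the method used in the study. The function names `logical_error_rate` and `majority_decode` are hypothetical.

```python
from math import comb


def majority_decode(bits):
    """Return the bit value held by the majority of the copies."""
    return int(sum(bits) > len(bits) // 2)


def logical_error_rate(p, n):
    """Probability that majority vote over n copies gives the wrong bit,
    assuming each copy flips independently with probability p."""
    if n % 2 == 0:
        raise ValueError("n must be odd so the vote cannot tie")
    # The decoder fails when more than half of the copies are flipped.
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


if __name__ == "__main__":
    # Illustrative numbers only: a 1% flip rate per copy.
    for n in (1, 3, 5, 7):
        print(n, logical_error_rate(0.01, n))
```

In this toy model, adding copies lowers the chance of an uncorrected error as long as the per-copy error rate is below 50%. Real quantum decoders, including neural-network ones, work on far more complex codes and noise.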

The waterfall discovery: a breakthrough in quantum computing

Perhaps the most startling finding is what the researchers call the waterfall effect. Traditional models assumed that error rates improved steadily as systems grew. However, the Harvard team found that once error rates drop below a certain threshold, they begin to fall much more steeply than predicted. The researchers also report that Cascade’s single-shot latency, the time it takes to process one round of correction, is measured in millionths of a second. This speed is already compatible with several leading quantum platforms, including trapped-ion and neutral-atom systems.

Despite the excitement, the team noted certain trade-offs. Unlike traditional algorithms, AI-based decoders do not yet have the same theoretical guarantees and rely heavily on the quality of their training data. Additionally, smaller AI models performed poorly, meaning high-performance decoding requires significant computational power. Nonetheless, the finding suggests that quantum computers may not need as many qubits as previously thought to reach useful performance.

-

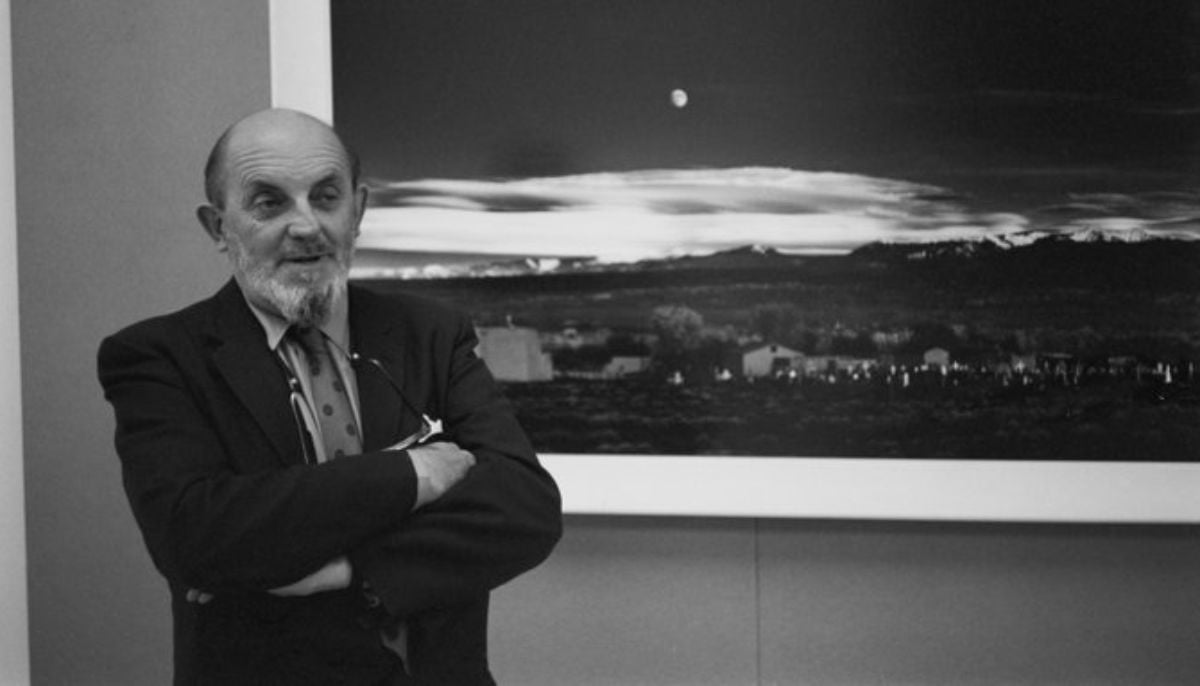

AI version of iconic ‘Moonrise’ photo sparks rights backlash

-

Nvidia AI chief reveals how to get past automated hiring systems

-

Fake CAPTCHA scam installs malware in seconds: Here’s how to stay safe

-

Google's AI bans artists' accounts with zero human review

-

‘Stop Hiring Humans’ billboard campaign sparks job loss fears

-

Musk sparks backlash as he calls Neuralink 'Jesus-level miracle'

-

First AI-generated feature film premieres at Cannes

-

China’s DeepSeek restructures pricing with a permanent 75% cut on V4-Pro AI model