Can AI protect classified data? US defence tests limits

AI data security issue creates a need for specific infrastructure providers to secure sensitive information

The artificial intelligence security challenge is deepening as US defence and intelligence agencies race to adopt AI tools without risking sensitive data leaks.

The issue has gained attention following tensions between Anthropic and the Pentagon, highlighting how governments are struggling to balance innovation with secrecy. As AI adoption expands, a new class of firms is stepping in to solve what experts call the AI secrecy problem.

Is data safe with AI?

The AI security problem has created demand for specialised infrastructure providers: companies that build systems allowing organisations to use AI without disclosing sensitive data. According to Ask Sage founder Nicolas Chaillan, the industry is currently valued at approximately $2 billion.

Companies such as Amazon Web Services and Palantir offer secure cloud and software infrastructure for AI models. These firms matter because they enable defence agencies to use AI tools within their classified networks.

The core of the AI security problem is a trade-off. AI tools need large amounts of data to function effectively, but feeding them sensitive data raises the likelihood of breaches. Specialists warn that, without adequate protection, AI tools could reveal sensitive information.

Emily Harding, a researcher at the Center for Strategic and International Studies, describes this as a Catch-22: too much data poses security threats, while too little undermines the effectiveness of AI tools.

To address the problem, companies are adopting approaches such as Retrieval-Augmented Generation (RAG), which lets an AI model access data without necessarily storing it. The approach works like a secure room: data is retrieved only when it is needed.

Brian Raymond, the CEO of Unstructured, said that such an approach helps maintain strict access controls. Analysts can retrieve only the data their job function permits, which helps prevent leaks.
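The retrieve-on-demand idea with role-based access can be illustrated with a toy sketch. Everything in it is hypothetical and chosen for illustration: the `Document` type, the `retrieve` and `build_prompt` functions, the role names and the sample texts are assumptions, and word overlap stands in for the vector search a real system would likely use. It does not represent how Unstructured or any other vendor implements this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_roles: frozenset  # hypothetical: roles cleared to read this document


def tokenize(text):
    """Lowercase a string and split it into a set of words."""
    return set(text.lower().split())


def retrieve(query, role, store, top_k=2):
    """Return up to top_k documents the role may read, ranked by word overlap."""
    query_terms = tokenize(query)
    # Apply the access check first so restricted text is never scored or returned.
    visible = [d for d in store if role in d.allowed_roles]
    scored = [(len(query_terms & tokenize(d.text)), d) for d in visible]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [d for _, d in ranked[:top_k]]


def build_prompt(query, role, store):
    """Assemble a prompt from the retrieved passages only, at question time."""
    passages = retrieve(query, role, store)
    context = "\n".join(f"[{d.doc_id}] {d.text}" for d in passages)
    return f"Context:\n{context}\n\nQuestion: {query}"


# Illustrative data only; not drawn from any real system.
STORE = [
    Document("d1", "fuel logistics schedule for the northern depot",
             frozenset({"logistics", "command"})),
    Document("d2", "signals intercept summary for the northern sector",
             frozenset({"intel"})),
    Document("d3", "cafeteria menu for the northern depot",
             frozenset({"logistics", "intel", "command"})),
]

if __name__ == "__main__":
    print(build_prompt("northern depot schedule", "logistics", STORE))
```

In this sketch the role filter runs before ranking, so a user in the hypothetical `logistics` role never sees the `intel`-only document, whatever the question is. The model receives only the passages pulled for that one question, rather than being trained on the full document store.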

Additionally, the US Department of Defence has launched its own AI platform, GenAI.mil, to boost the use of AI across different agencies. Despite these efforts, the AI security problem remains unsolved because GenAI.mil only handles unclassified tasks.

-

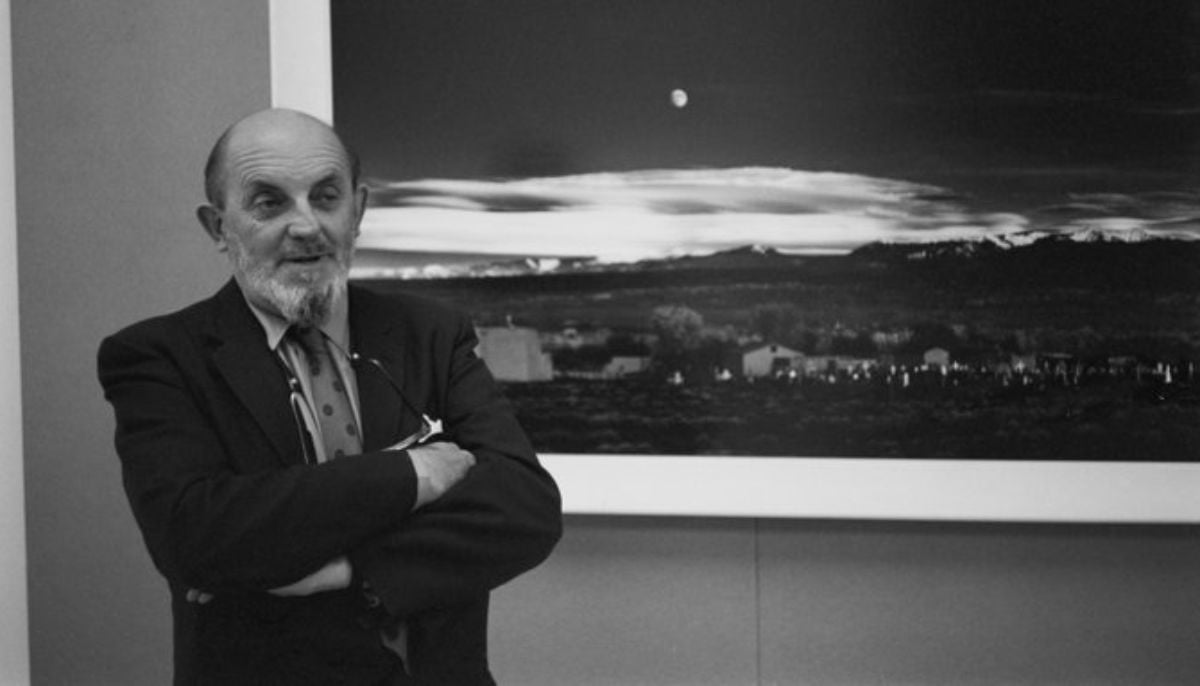

AI version of iconic ‘Moonrise’ photo sparks rights backlash

-

Nvidia AI chief reveals how to get past automated hiring systems

-

Fake CAPTCHA scam installs malware in seconds: Here’s how to stay safe

-

Google's AI bans artists' accounts with zero human review

-

‘Stop Hiring Humans’ billboard campaign sparks job loss fears

-

Musk sparks backlash as he calls Neuralink 'Jesus-level miracle'

-

First AI-generated feature film premieres at Cannes

-

China’s DeepSeek restructures pricing with a permanent 75% cut on V4-Pro AI model