OpenAI launches GPT-4o: Important features to know

Sam Altman's company takes ChatGPT to next level

OpenAI has launched GPT-4o, an iteration of the GPT-4 model that powers its flagship product, ChatGPT.

The latest update “is much faster” and improves “capabilities across text, vision, and audio,” OpenAI CTO Mira Murati said in a livestream announcement on Monday, according to The Verge.

The model is set to be free for all users, and paid users will continue to “have up to five times the capacity limits” of free users, Murati added.

OpenAI says in a blog post that GPT-4o’s capabilities “will be rolled out iteratively (with extended red team access starting today),” but its text and image capabilities will start rolling out in ChatGPT today.

Moreover, OpenAI CEO Sam Altman posted that the model is “natively multimodal”. This means that the model can generate content or understand commands in voice, text, or images.

“Developers who want to tinker with GPT-4o will have access to the API, which is half the price and twice as fast as GPT-4-turbo,” Altman added on X.

The features bring speech and video to all users, free or paid, and will be rolled out over the next few weeks. The key point is how much of a difference voice and video make when interacting with GPT-4o in ChatGPT.

The changes, OpenAI told viewers on the livestream, are aimed at “reducing the friction” between “humans and machines”, and “bringing AI to everyone”.

-

Can we finally find aliens? Scientists reveal a surprising new ‘organizational’ approach

-

Study reveals how to tell real alien life from chemical fakes

-

Scientists find hidden third ancestral group in Japanese genomes

-

SpaceX ‘Space Junk’ is on a collision course with the Moon, scientists say

-

Do you know what happened on May 10, 1967? NASA's M2-F2 disaster explained

-

Why the Southern Ocean is melting: Antarctica’s sea ice resilience reaches a breaking point

-

Giant black holes are cosmic ‘Frankensteins’ built by mergers, new study reveals

-

NASA’s Artemis 2 moon launch becomes the largest event in Space Coast history

-

Is success written in your DNA? New study reignites nature vs nurture debate

-

Researchers found 240-million-year-old giant mysterious 'sand creeper'

-

New solar-powered process turns plastic waste into clean hydrogen

-

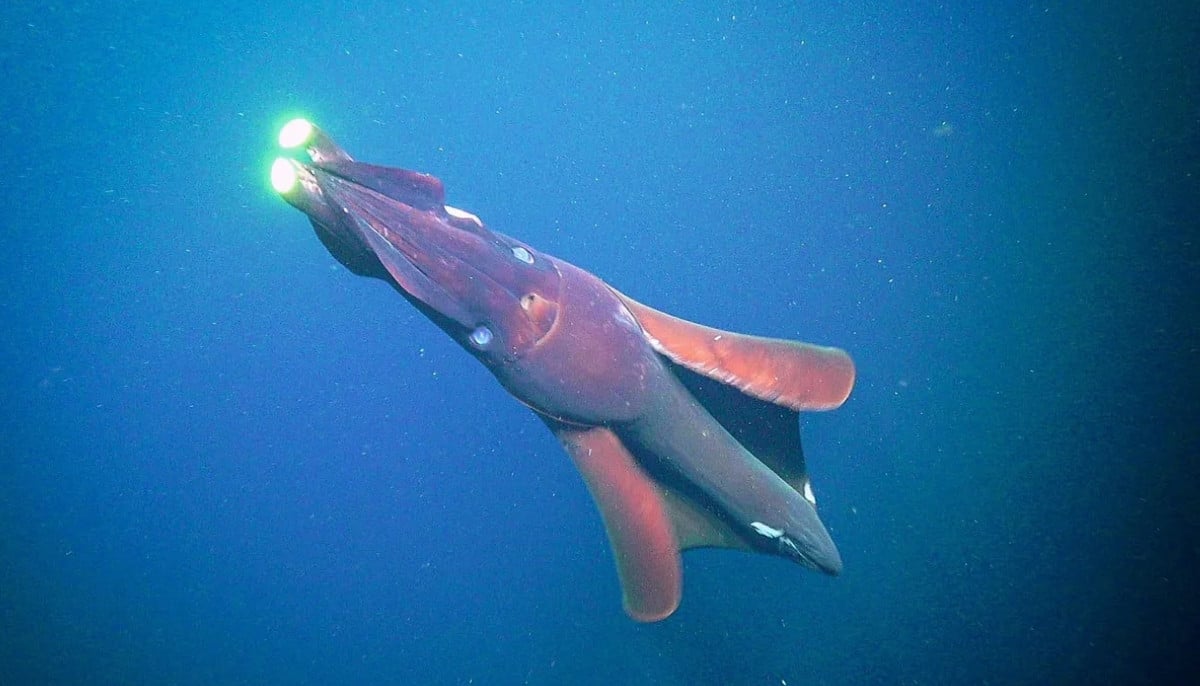

Giant squid detected off Western Australia coast as deep-sea study reveals hidden species