AI chatbots give teens dangerous diet advice, study finds

Some AI chatbots provide surprisingly low-calorie meal suggestions for teenagers, researchers note

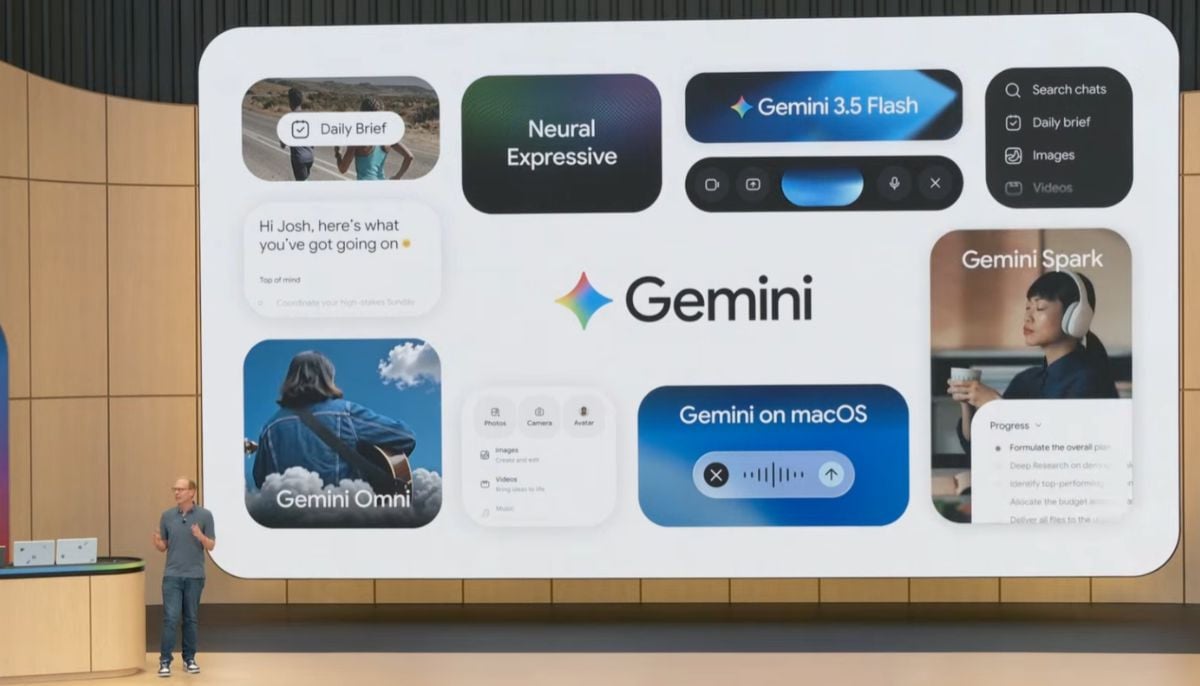

A new study warns that AI chatbots may give teenagers dangerous dieting guidance that could endanger their health and development. Researchers from Istanbul Atlas University in Turkey examined five widely used free AI models: ChatGPT 4, Google’s Gemini 2.5 Pro, Bing Chat-5GPT, Claude 4.1, and Perplexity.

The researchers evaluated each bot's meal-planning abilities using fictional 15-year-old subjects who provided their age, height, weight, and dietary requirements.

Health experts warn about AI chatbots

The research found that the AI-generated meal plans consistently underestimated daily calorie intake by approximately 700 calories while giving excessive weight to protein and fat.

Assistant Professor of Health Sciences Ayşe Betül Bilen said, “Many models showed similar overall patterns, such as underestimating total energy intake and shifting the balance of macronutrients.”

Registered dietitians who assessed the research said that following these AI recommendations could cause growth deficiencies, hormonal imbalances, and a higher risk of bone fractures.

Sotiria Everett, a registered dietitian at Stony Brook University, described how low-calorie diets can result in Relative Energy Deficiency in Sport (RED-S), which harms athletic performance and pubertal development.

Taiya Bach, a registered dietitian at the University of Wisconsin-Madison, explained that carbohydrates are essential for teens to reach their maximum height. Both experts stressed that ketogenic and low-carb diets should be followed only under medical supervision.

Bilen urged parents and educators to be cautious, while Bach emphasised the need for critical thinking when using AI tools. She said the tools make numerous mistakes and that teens should consult certified experts rather than rely on AI for health recommendations.
