How AI can read your thoughts without you speaking

Stanford and Japan studies show AI can translate brain activity into text and images in real time

Artificial intelligence, beyond carrying out tasks from prompts and commands, can now read and interpret brain activity. In a recent Stanford University study, a 52-year-old woman who had been paralyzed after a stroke was able to read text on a screen that interpreted her thoughts.

Researchers implanted microelectrode arrays in her brain to capture neural signals, which AI then translated into words. The study also included participants with amyotrophic lateral sclerosis (ALS) and demonstrated the power of brain-computer interfaces (BCIs).

Earlier methods required patients to speak. BCIs that use artificial intelligence, however, recognise the brain's signal patterns associated with speech.

Stanford's research team found that it could decode "inner speech", meaning sentences imagined silently in the brain, with up to 74% accuracy in structured tasks. These systems translate brain activity into text or spoken words and can capture non-verbal aspects of speech such as pitch and rhythm.

In addition to speech, AI technology has been able to reconstruct images and sounds from brain scans. One study carried out in Japan and another in Israel used fMRI scans together with AI image generators.

Researchers also used data collected from subjects as they listened to music to determine which pieces they had heard. The findings shed light on how people perceive the world and point to new methods for medicine and human-computer interaction.

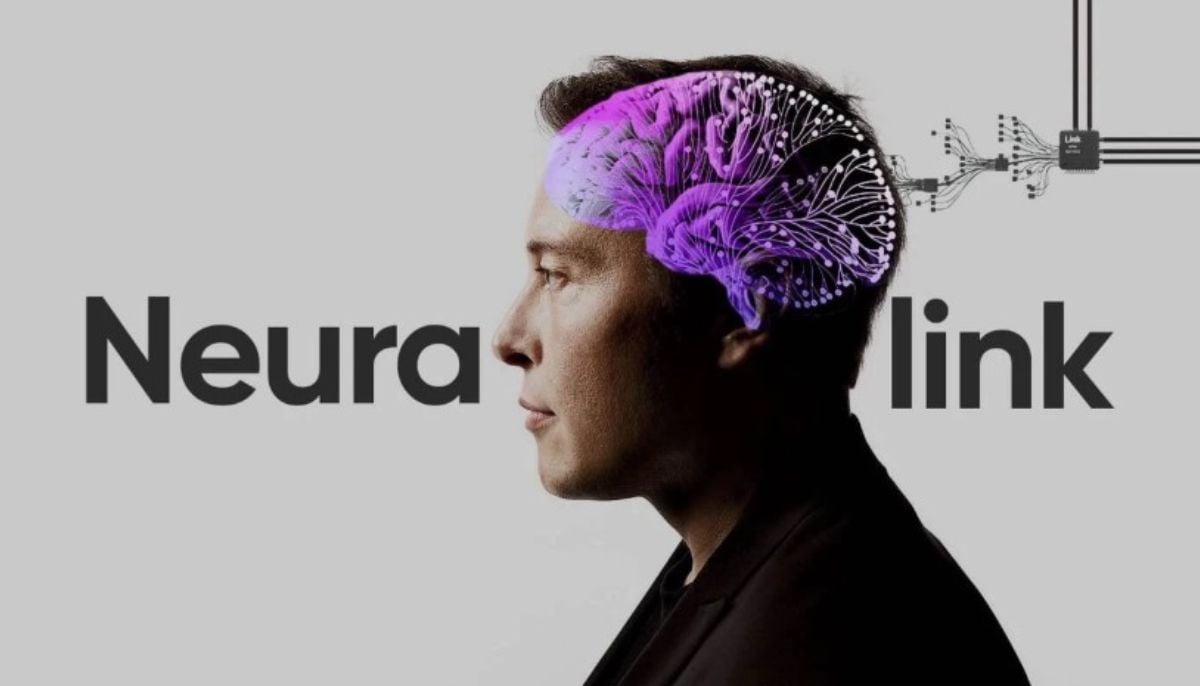

Maitreyee Wairagkar of the University of California, Davis, and other experts predict that brain chips will soon become commercially available as companies like Neuralink drive their development. Microelectrode array technology will enable AI systems to handle larger numbers of neurones, making it possible to transmit data through direct, real-time connections.

Researchers are studying different brain areas to find out how people with damaged brain regions produce inner speech. That work might enable stroke patients and others with speech difficulties to speak more normally.
