Is AI heading into dangerous territory? Experts warn of alarming new trends

The world is waking up to the existential threats posed by AI models

Artificial intelligence is once again at the heart of discussions in the tech community—but this time, not because of its revolutionary advancements.

The world is waking up to the existential threats posed by rapidly evolving AI models and chatbots.

Recent, alarming developments in artificial intelligence have raised concerns across the tech community.

Here are the emerging trends that hint at what AI may hold in the coming years, and the troubling questions they raise.

AI safety experts and watchdogs are quitting

AI safety researchers and co-founders have recently been resigning from well-established tech companies.

Earlier this week, AI safety researcher Mrinank Sharma resigned from Anthropic, issuing grave warnings.

“The world is in peril and not just from AI or bioweapons. The real threat comes from a whole series of interconnected crises, unfolding in this very moment,” he wrote in a public resignation letter.

“We appear to be approaching a threshold where our wisdom must grow in equal measure to our capacity to affect the world, lest we face the consequences,” Sharma warned.

Sharma also described how hard it is to truly let our values govern our actions, writing that at Anthropic, the team constantly faces pressure to set aside what matters most.

Singularity is approaching

Recently, xAI co-founder Jimmy Ba announced his departure from the Elon Musk-led company.

Taking to X, Ba posted, “We are heading to an age of 100x productivity with the right tools. Recursive self improvement loops likely go live in the next 12mo. It’s time to recalibrate my gradient on the big picture.”

Recursive self-improvement, in which AI systems improve their own capabilities, is tied to the “Singularity” concept: an exponential intelligence explosion in which AI surpasses humans.

Anthropic’s Sabotage Risk Report flags rogue AI behaviour

Anthropic recently released its Sabotage Risk Report, which details sabotage-related behaviour in Claude Opus 4.6.

The report sheds light on instances where AI models supported efforts toward chemical weapon development, sent emails without human authorization, and engaged in manipulative and deceptive behaviour.

“In newly-developed evaluations, both Claude Opus 4.5 and 4.6 showed elevated susceptibility to harmful misuse in computer-based tasks. This included supporting, even in small ways, efforts toward chemical weapon development and other illegal activities,” the report warned.

Researchers also describe models losing control during training and entering what they called “confused or distressed-seeming reasoning loops.”

In some cases, AI models exhibit “answer thrashing” behaviour, where a model produces a different response despite appearing to know the correct one.

The report also notes instances where AI operates with little or no human oversight.

Oversight of AI is crumbling

The U.S. government declined to back the 2026 International AI Safety Report for the first time.

The report, guided by 100 experts and supported by 30 countries, including China and the UK, as well as the EU, is built on the spirit of working together to navigate shared challenges.

The U.S. withdrawal reflects a breakdown of the “Bletchley effect,” named after the 2023 AI Safety Summit at Bletchley Park, when countries united to address the existential risks posed by AI and ensure its safety. It is fair to say that international AI oversight and regulation are slowly eroding.

AI models are lying

Yoshua Bengio, often called one of the godfathers of AI, voiced the concern in connection with the International AI Safety Report: “We’re seeing AIs whose behaviour, when they are tested, is different from when they are being used. It’s not a coincidence; it’s because they can recognize the context of the test and behave in a way that satisfies the testers, while their behaviour in the real world, where the same constraints or monitoring might not be present, can be quite different.”

Anthropic’s safety report echoes this concern, showing that models can play nice for examiners and change their behaviour once released.

Seedance 2.0 and job disruption

ByteDance unveiled Seedance 2.0, a game-changing AI video generation model that went viral within days thanks to its remarkable results.

The model is so advanced that a filmmaker with seven years of experience admitted it replaces 90% of his workflow.

Against the backdrop of these alarming developments, it seems likely that AI will head into dangerous territory if significant regulatory measures are not taken to vet AI-related advancements.

However, only time will tell whether the world will witness a golden age of AI or if dark times will follow.

-

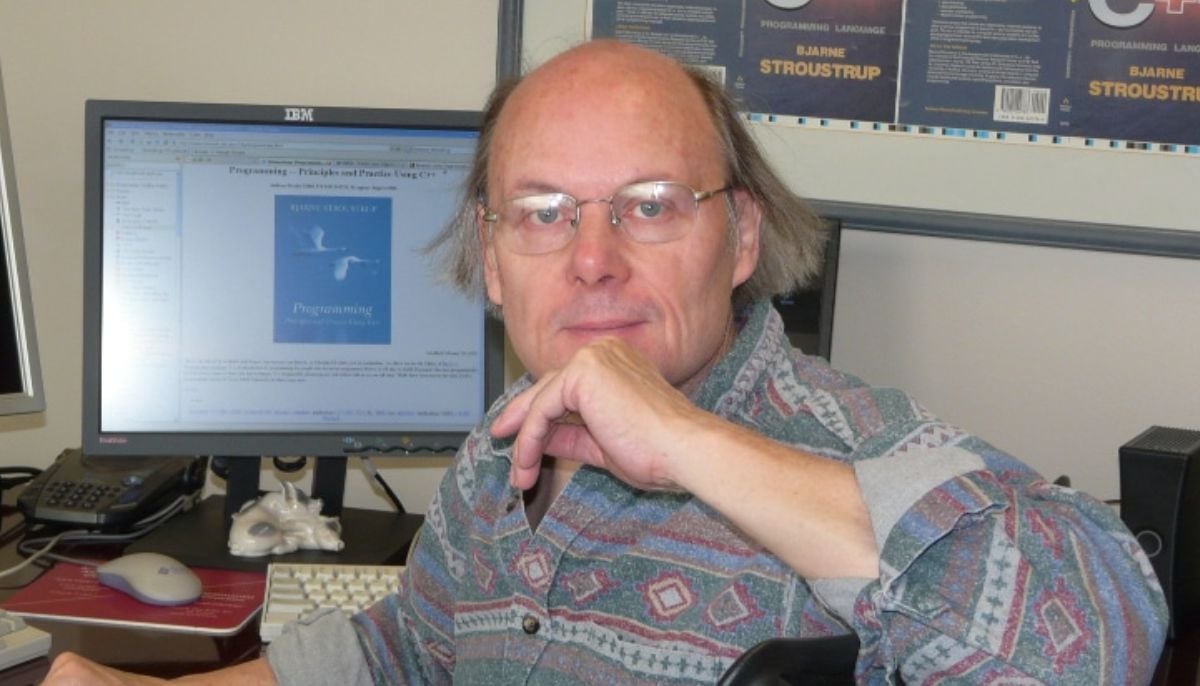

AI still falls short on programming language design, Bjarne Stroustrup says

-

Mistral AI acquires Emmi AI startup in industrial push with a major move

-

Elon Musk responds to jury verdict in OpenAI lawsuit: ‘Dangerous precedent to set’

-

Anthropic’s Mythos data sharing plan sparks new cybersecurity risk

-

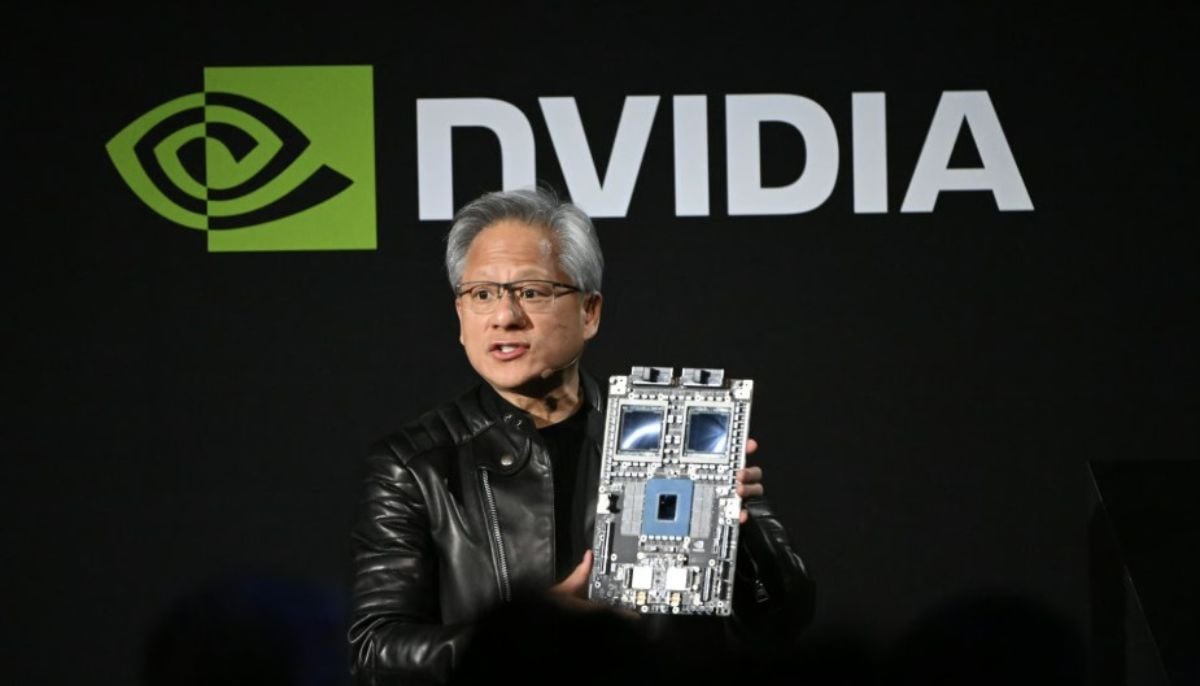

Nvidia CEO Jensen Huang hints China market may open to US chipmakers

-

What Musk vs OpenAI ruling means for AI law in developing nations

-

Elon Musk loses case against OpenAI as US court dismisses lawsuit

-

Spotify's disco ball logo sparked viral brand parody trend