Open-source AI models without guardrails vulnerable to criminal misuse, researchers warn

An alert has been issued regarding the vulnerability of open-source large language models to criminal exploitation

Cybersecurity researchers from SentinelOne and Censys have reportedly issued a warning regarding the susceptibility of open-source large language models (LLMs) to criminal exploitation. The research underscores the dangers of a growing ecosystem in which AI models are being stripped of safety guardrails. By removing these constraints, users are creating significant security risks.

Researchers warn that hackers could target computers running the LLMs, directing them to carry out spam operations or disinformation campaigns while bypassing security measures.

Many open-source LLM variants exist, and a substantial number of those running on internet-accessible hosts are variants of Meta’s Llama and Google DeepMind’s Gemma. The researchers specifically scrutinized hundreds of instances in which guardrails had been completely removed.

Juan Andres Guerrero-Saade, executive director for intelligence and security research at SentinelOne, said: “AI industry conversation about security controls is ignoring this kind of surplus capacity that is clearly being utilized for all kinds of different stuff, some of it legitimate and some obviously criminal.”

The analysis enabled researchers to observe system prompts, providing direct insight into model behavior. They found that 7.5% of these prompts could potentially cause significant damage.

The researchers also observed that 30% of the hosts operate out of China, while approximately 20% are based in the US.

Following the recent remarks, a spokesperson for Meta declined to respond to certain questions about developers’ responsibility for addressing concerns related to downstream abuse of open-source models.

Microsoft AI Red Team Lead Ram Shankar Siva Kumar stated via email that while Microsoft plays a crucial role in various sectors, open models are simultaneously driving transformative technologies. The company is continuously monitoring emerging threats and improper applications.

Furthermore, the responsibility for safe innovation requires a collective commitment across creators, deployers, researchers, and security teams.

-

Mark Zuckerberg reassures employees, says 'no further company-wide layoffs' expected this year

-

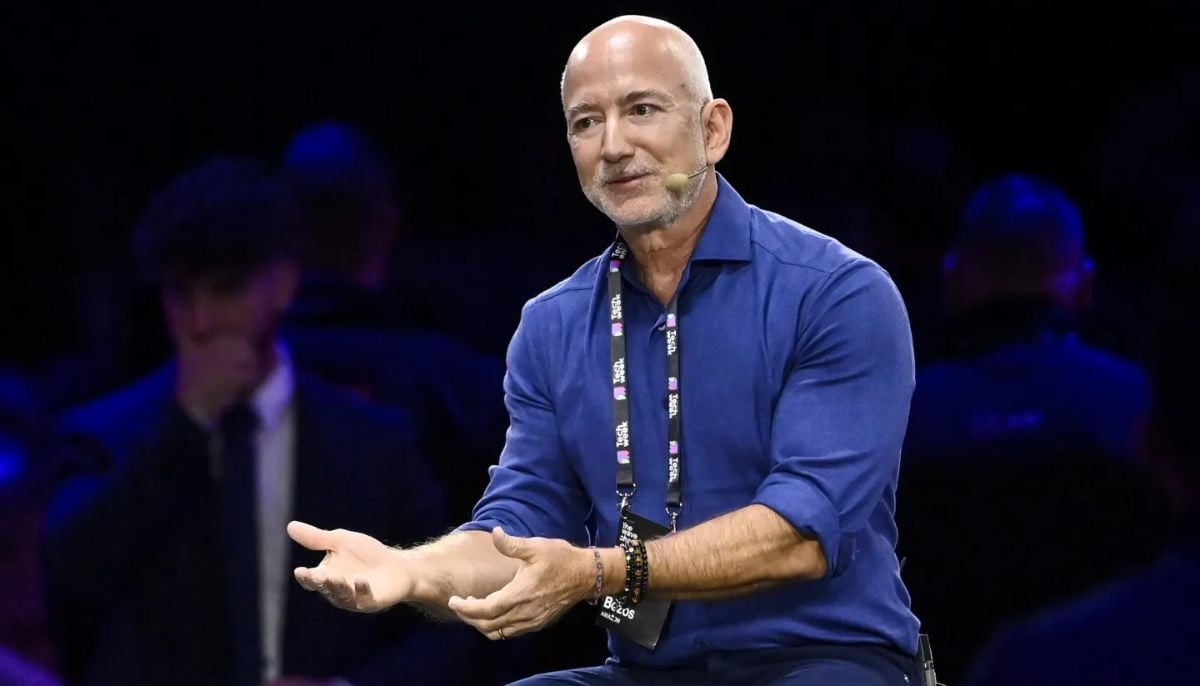

Is AI boom a bubble? Jeff Bezos has its surprising answer

-

Jeff Bezos says space data centres are 'very realistic', but not soon

-

Bristol Myers to deploy Anthropic’s Claude AI model to accelerate drug discovery

-

US groups demand Roblox investigation over child safety, ‘deceptive’ marketing practices

-

Zuckerberg leaked audio shows Meta used employees to train AI

-

OpenAI co-founder Andrej Karpathy joins Anthropic to lead AI research unit

-

AI layoffs are ‘dumb’, says Google DeepMind chief Demis Hassabis

-

Could AI create $500 trillion global economy? Elon Musk, Jensen Huang think so

-

GitHub probes alleged breach of 4,000 internal repositories

-

Why Singapore is urging financial firms to scale up AI for better jobs

-

Google reinvents search with AI in biggest update in 25 years: Is web traffic apocalypse coming?