Experts warn of dangerous health advice in Google AI Overviews

Health misinformation in Google AI summaries could delay diagnosis and treatment, experts say

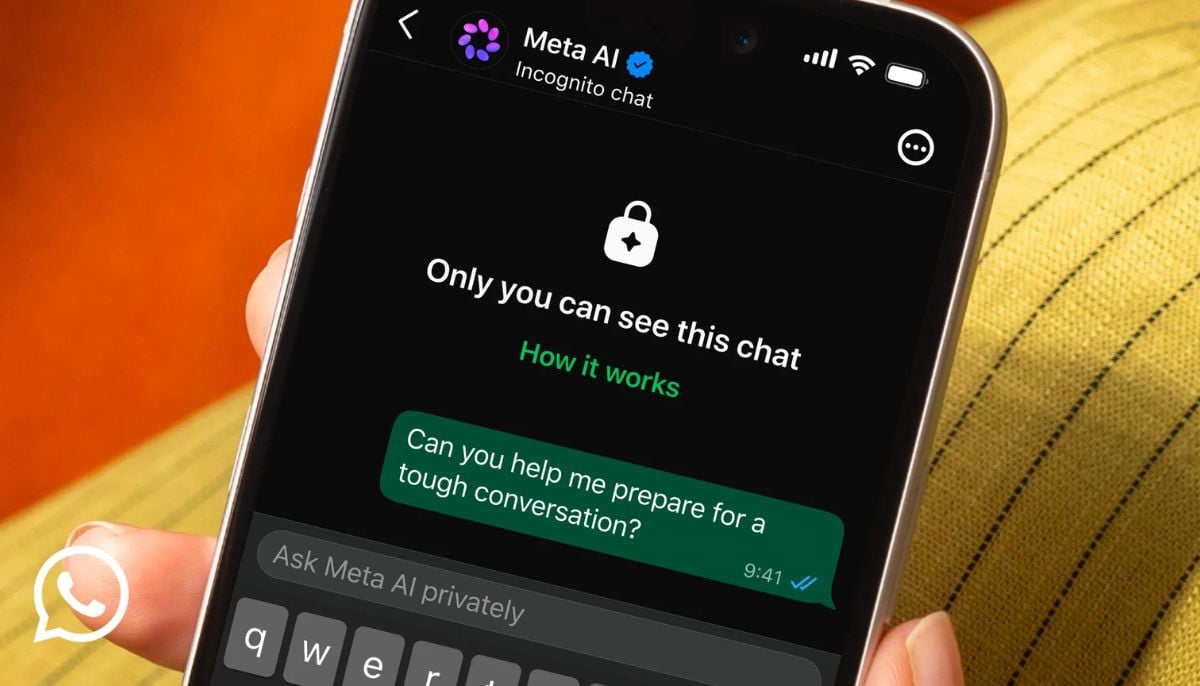

Concerns are mounting over health advice provided by Google AI Overviews, following investigations into false information that could put patient safety at risk.

These summaries, positioned at the top of search results, are generated by AI but often appear as authoritative medical facts.

The investigation identified multiple cases where Google AI summaries provided inaccurate medical advice. Examples included incorrect guidance for pancreatic cancer patients, misleading explanations of liver blood test results, and false information about women’s cancer screening.

Warnings have been issued that such errors could lead people to dismiss symptoms, delay treatment, or follow harmful advice.

Furthermore, there are notable inconsistencies, with the same health queries producing different AI-generated answers at different times.

This variability undermines trust and raises the risk that misinformation will influence health decisions.

Because AI summaries appear at the top of the page, users are less likely to scroll down to verified sources.

Additionally, health organizations have warned that the feature frequently provides inaccurate information in the most visible section of search results.
