AI chatbots may treat users differently based on names, study shows

Study exposes racial disparities in AI chatbot advice across multiple domains

Are you considering using a chatbot for advice?

Think again. A recent study has uncovered concerning disparities in chatbot responses based on the race suggested by a user's name.

Think twice before asking a chatbot for career advice.

Researchers found that chatbots such as ChatGPT 4 and PaLM-2 suggested lower salaries for job candidates with names typically associated with Black people. For example, the suggested salary for a lawyer named Tamika might be lower than for one named Todd, even if their qualifications are the same.

“Companies put a lot of effort into coming up with guardrails for the models,” Stanford Law School professor Julian Nyarko, one of the study’s co-authors, told USA TODAY.

“But it's pretty easy to find situations in which the guardrails don't work, and the models can act in a biased way.”

This isn't just about salaries.

The study also found that chatbots responded differently depending on the name used across a range of scenarios, from buying a house to predicting election winners. In most cases, the chatbots showed biases that disadvantaged names associated with Black people and women.

Why does it happen?

It turns out that AI models pick up on the biases present in the data they are trained on. Just like some people hold stereotypes, these biases can show up in AI responses.

Researchers say that just being aware of the problem is a big first step. AI companies are working on reducing bias in their models.

Some argue that chatbots might reasonably tailor advice by race or gender because of real-world differences. For example, financial advice might differ depending on income levels, which can be linked to race and gender in some societies.

However, the bottom line is this: when seeking advice from a chatbot, be aware that your name might influence the response you get.

Interestingly, the only consistent exception was in ranking basketball players, where Black athletes were favoured.

The researchers said that the first step in mitigating the risk of racially biased answers is to acknowledge that these biases exist.

-

SpaceX: Starship V3 all set for debut launch ahead of IPO

-

Four alien species recovered from crashed UFOs, Ex-CIA researcher claims

-

NASA delays Moon landing as Artemis III shifts to orbit mission

-

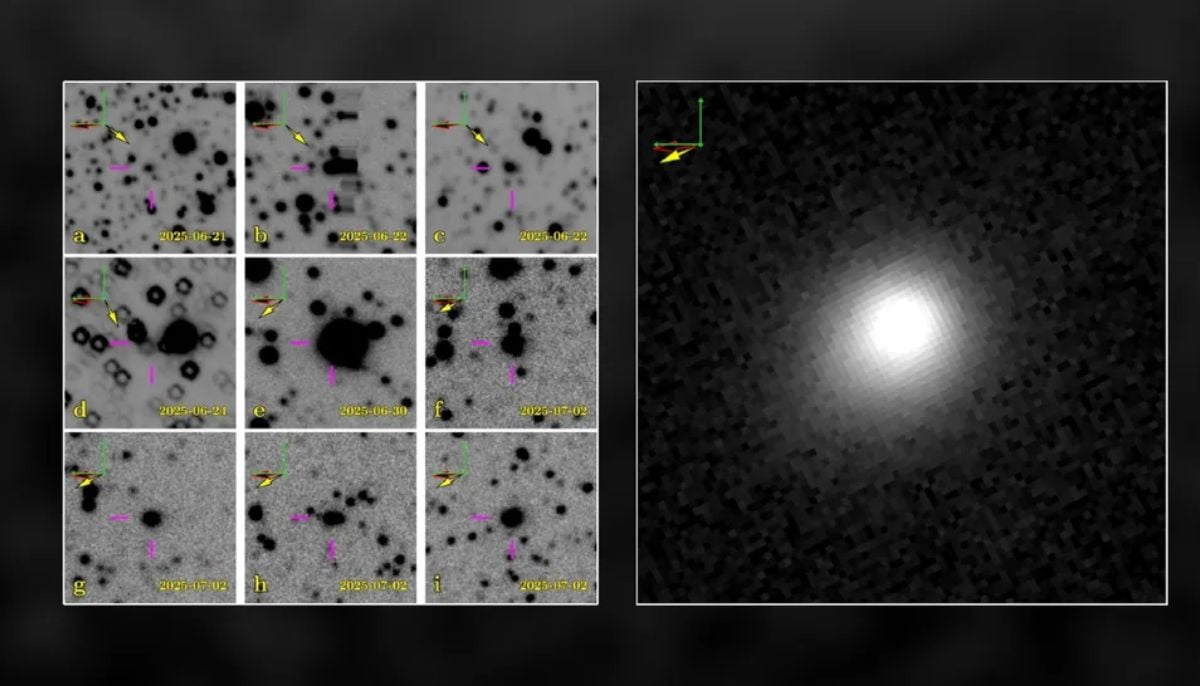

Scientists reveal shocking early sighting of 3I/ATLAS comet

-

Asteroid 2026 JH2 to pass extremely close to Earth on May 18: Should we be concerned?

-

Meet the ‘last titan’: Giant new dinosaur identified from fossils in Thailand

-

Can we finally find aliens? Scientists reveal a surprising new ‘organizational’ approach

-

Study reveals how to tell real alien life from chemical fakes

-

Scientists find hidden third ancestral group in Japanese genomes

-

SpaceX ‘Space Junk’ is on a collision course with the Moon, scientists say

-

Do you know what happened on May 10, 1967? NASA's M2-F2 disaster explained

-

Why the Southern Ocean is melting: Antarctica’s sea ice resilience reaches a breaking point