OpenAI rolls out three new audio models for real-time voice tasks

OpenAI's new models, GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper, will improve speech recognition, voice generation, and real-time conversational capabilities for AI-powered assistants and applications

OpenAI introduced three audio models for its developer platform on Thursday. The models aim to make voice-based software agents more conversational and capable of completing tasks in real time.

The launch comes as competition in AI-powered voice technology intensifies, with major technology firms investing heavily in multimodal systems that combine text, audio, and visual understanding.

The rollout is aimed at developers building conversational AI tools, virtual assistants, customer service platforms, and other voice-enabled applications.

Additionally, the application programming interface (API) launch moves the ChatGPT maker beyond transcription and chat, toward agents that can listen, translate, and act during live conversations.

OpenAI's new audio models: GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper

The new models are GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper. OpenAI said they are available to test in its developer playground.

GPT-Realtime-2 is designed to manage harder requests, call tools, handle interruptions, and maintain context across longer voice sessions.

GPT-Realtime-Translate supports translation from more than 70 languages into 13 output languages, targeting customer support, education, and other settings.

GPT-Realtime-Whisper provides live speech-to-text, allowing captions, meeting notes, and workflow updates to be generated as a speaker talks.

Customers testing the models include online real estate marketplace Zillow, online travel agency Priceline, and European telecommunications firm Deutsche Telekom.

Pricing for GPT-Realtime-2 starts at $32 per million audio input tokens, GPT-Realtime-Translate costs $0.034 per minute, and GPT-Realtime-Whisper costs $0.017 per minute.
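To put those prices in concrete terms, the short Python sketch below works through the arithmetic. The three rates are the figures quoted above; the function names, the one-hour session length, and the 250,000-token count are illustrative assumptions, since the article does not say how many audio tokens a minute of speech consumes.

```python
# Rates as quoted in the article, in US dollars.
TRANSLATE_PER_MINUTE = 0.034
WHISPER_PER_MINUTE = 0.017
REALTIME2_PER_MILLION_INPUT_TOKENS = 32.0


def per_minute_cost(minutes: float, rate_per_minute: float) -> float:
    """Cost of a session billed by the minute."""
    return minutes * rate_per_minute


def audio_input_token_cost(
    tokens: int, rate_per_million: float = REALTIME2_PER_MILLION_INPUT_TOKENS
) -> float:
    """Cost of audio input billed per million tokens."""
    return tokens / 1_000_000 * rate_per_million


if __name__ == "__main__":
    session_minutes = 60  # assumed session length, for illustration only
    translate = per_minute_cost(session_minutes, TRANSLATE_PER_MINUTE)
    whisper = per_minute_cost(session_minutes, WHISPER_PER_MINUTE)
    print(f"One hour of GPT-Realtime-Translate: ${translate:.2f}")
    print(f"One hour of GPT-Realtime-Whisper:   ${whisper:.2f}")

    assumed_tokens = 250_000  # hypothetical token count, not from the article
    realtime2 = audio_input_token_cost(assumed_tokens)
    print(f"{assumed_tokens:,} audio input tokens on GPT-Realtime-2: ${realtime2:.2f}")
```

The GPT-Realtime-2 figure covers audio input only at the starting rate, so a real bill would depend on the actual token usage and on any other charges the article does not list.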

The company said the models are designed to deliver more natural speech, improved accuracy, and lower latency during live interactions.

-

US senator probes TikTok, Oracle-linked joint venture over data security concerns

-

Meta’s employee tracking tool sparks EU privacy concerns over AI training use

-

Qualcomm rolls out AI chip for low-cost Windows PCs

-

iPhone 18 Pro dummy units reveal 4 new colour options

-

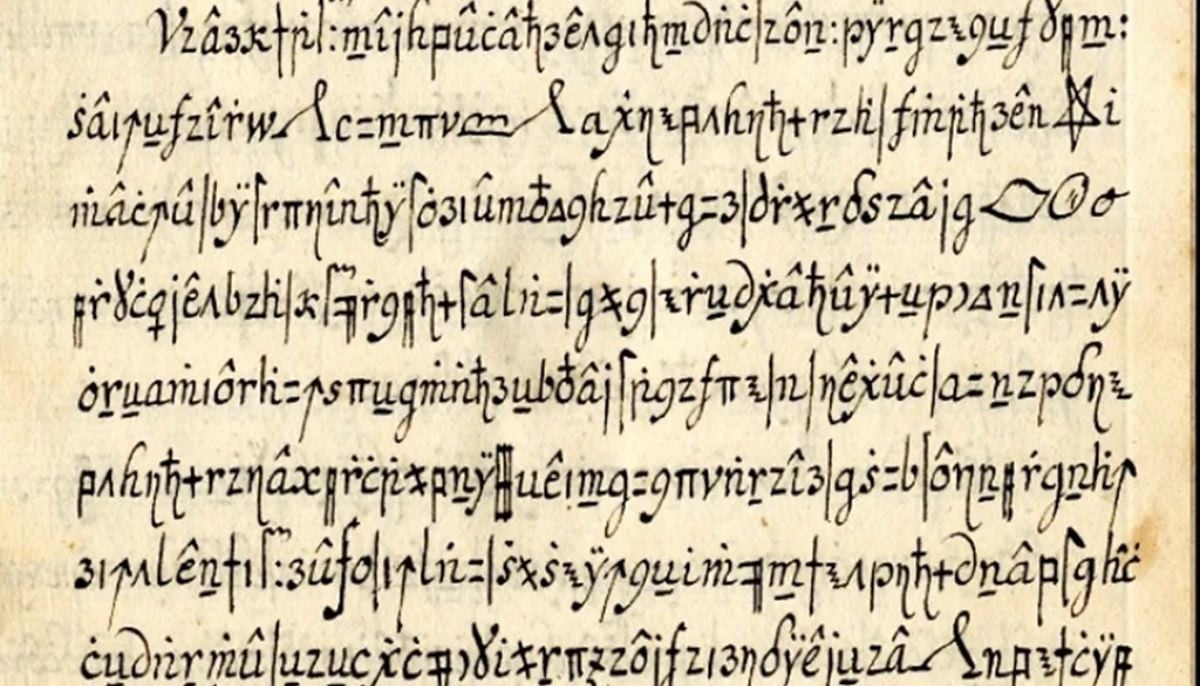

AI decoded 400-year-old Vatican cipher in 29 minutes flat

-

Court ruling on Google advertising tools may transform online ads

-

LinkedIn founder predicts next AI boom after chatbots: Here’s what comes next

-

UN issues landmark global guidelines for child online safety