New AI tool targets extremism, redirects ChatGPT users to real-world help

The initiative aims to help AI companies tackle growing safety concerns stemming from the misuse of chatbots

ThroughLine, a New Zealand-based startup that already provides crisis redirection for OpenAI, Google, and Anthropic, is developing a new tool to identify users who display violent extremist tendencies and intervene.

The project, advised by The Christchurch Call, is designed to provide a “hybrid response” that combines specialized chatbot interactions with referrals to real-world mental health and deradicalization services.

The initiative responds to growing safety concerns over the use of chatbots to plan violent attacks. Major AI companies are facing multiple lawsuits accusing them of failing to prevent, or even enabling, violence.

In February, OpenAI came under fire after revealing that the perpetrator of a deadly school shooting in Canada had used ChatGPT while planning the attack.

In response, the Canadian government threatened to take action against OpenAI after the company revealed it had banned the shooter from its platform without first alerting law enforcement.

Mechanism of intervention

When an AI chatbot detects signs of extremism, it will route the user to ThroughLine, which provides access to human-run helplines and a specialized intervention chatbot.

Unlike standard AI chatbots, which are trained on generic datasets, the intervention tool will be trained with input from counter-extremism experts to help ensure safe and effective dialogue.

"We're not using the training data of a base LLM," founder Elliot Taylor said, referring to the generic datasets that large language models are trained on to generate coherent text. "We're working with the correct experts. The technology is currently being tested, but no date has been set for release."

Taylor also explained that simply banning such users is not advisable, because they would likely turn to unregulated platforms, leading to more dangerous situations.

The tool combines automated support with a network of over 1,600 helplines across 180 countries.

Galen Lamphere-Englund, a counterterrorism adviser representing The Christchurch Call, said that rolling out the product beyond AI chatbots, to moderators of gaming forums and to parents and caregivers, would be even more productive.

OpenAI confirmed its relationship with ThroughLine. Anthropic and Google have not yet commented.

-

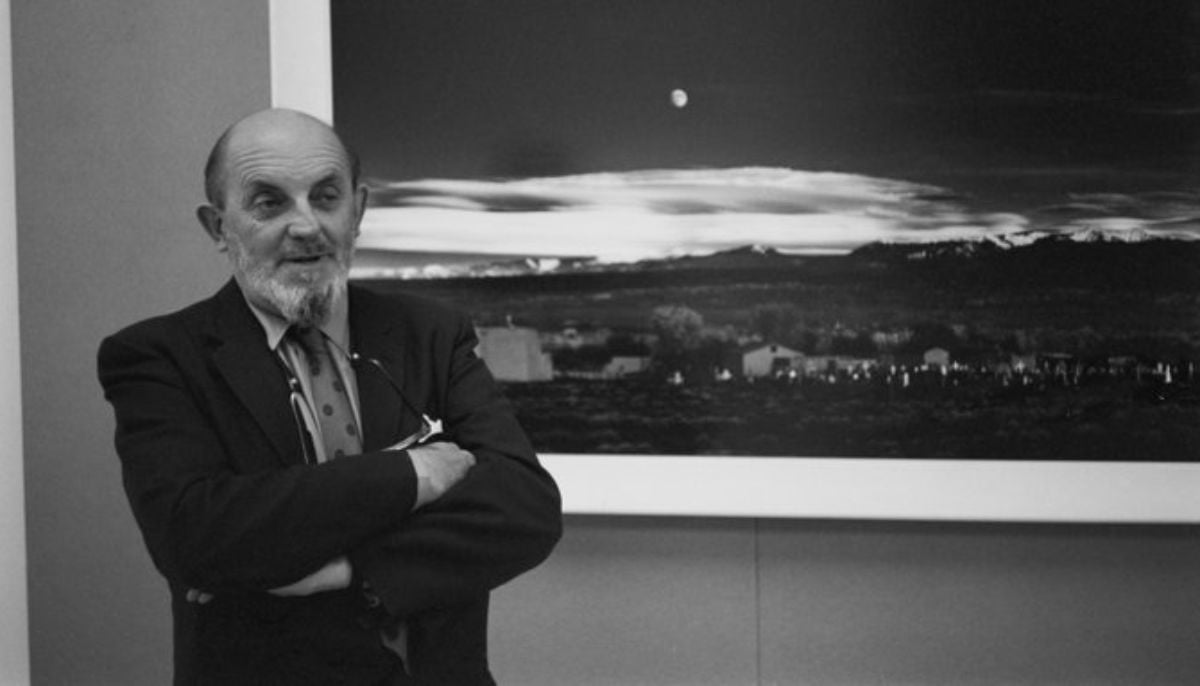

AI version of iconic ‘Moonrise’ photo sparks rights backlash

-

Nvidia AI chief reveals how to get past automated hiring systems

-

Fake CAPTCHA scam installs malware in seconds: Here’s how to stay safe

-

Google's AI bans artists' accounts with zero human review

-

‘Stop Hiring Humans’ billboard campaign sparks job loss fears

-

Musk sparks backlash as he calls Neuralink 'Jesus-level miracle'

-

First AI-generated feature film premieres at Cannes

-

China’s DeepSeek restructures pricing with a permanent 75% cut on V4-Pro AI model