Inside TikTok-Meta algorithm war: How the race for engagement is putting users at risk

The algorithms offered a path that maximizes profits at the expense of their audience's wellbeing

In the high-stakes arena of digital dominance, a silent war is being waged not with weapons but with code and algorithms. In 2026, the “algorithm arms race” between TikTok and Meta has reached a fever pitch, transforming social media from a space for connection into a battleground for human attention.

In the competitive race to win users’ attention, safety is the first casualty, and millions are put at risk through exposure to harmful content.

As reported by the BBC, social media giants have prioritized competition and profit over safety warnings and protocols, leading to the normalization of violence, radicalization, misogyny, sexual abuse, and harassment.

While the companies publicly tout safety features, insiders suggest the internal reality is one of "paranoia" over stock prices and market share.

An engineer working at Meta, the parent company of Instagram and Facebook, said he had been instructed by senior management to allow more harmful content, including conspiracy theories, in users’ feeds to rival TikTok’s dominance and boost engagement.

Profit over safety

According to senior Meta researcher Matt Motyl, Meta launched Instagram Reels in 2020 without sufficient guardrails. Internal research showed that Reels had flooded Instagram with high rates of violence, hate speech, and harassment.

The algorithm offered content creators a “path that maximizes profits at the expense of their audience's wellbeing and the current set of financial incentives our algorithms create does not appear to be aligned with our mission to bring the world closer together,” according to one internal study.

Prevalence of harm

According to one research paper that Motyl shared with the BBC, Reels had significantly higher rates of harm than the main feed:

- 75 percent higher for bullying and harassment

- 19 percent higher for hate speech

- 7 percent higher for violence/incitement

TikTok’s political prioritization

A TikTok whistleblower revealed that, to avoid regulatory threats or bans, cases involving politicians were often given higher priority than reports of child safety issues and sexual harassment.

Real-world consequences

Beyond the whistleblowers’ revelations, real-world examples also demonstrate the harmful impact of Reels on users.

Calum, now 19, said the algorithms radicalized him at the age of 14 by showing him misogynistic and racist content.

“The videos energised me, but not really in a good way. They just made me very kind of angry. It very much reflected the way I felt internally, that I was angry at the people around me,” he said.

UK counter-terror police report a "normalization" of far-right and antisemitic content and posts, noting that users are becoming desensitized to real-world violence.

Rebuttals

TikTok’s spokesperson dismissed these claims as fabricated, stating the company has robust parallel review structures that do not jeopardize child safety.

Meta also denied the claims, pointing to strict policies and teen accounts with more parental controls.

“The company has made real changes to protect teens online, including introducing a new Teen Accounts feature with built-in protections and tools for parents to manage their teens' experience,” Meta said.

-

Research reveals AI is ‘not the main driver’ of US job slowdown

-

Elon Musk's ‘Instagram is for girls’ remark sparks platform debate

-

OpenAI partners with Malta to give nationwide access to ChatGPT Plus

-

Do Instagram DMs boost your reach? Instagram head says 'no'

-

Zuckerberg, Pichai called to testify on child safety concerns

-

Steve Jobs asked this one question before hiring anyone

-

Google redesigns 4,000 emojis with 3D look for Android 17

-

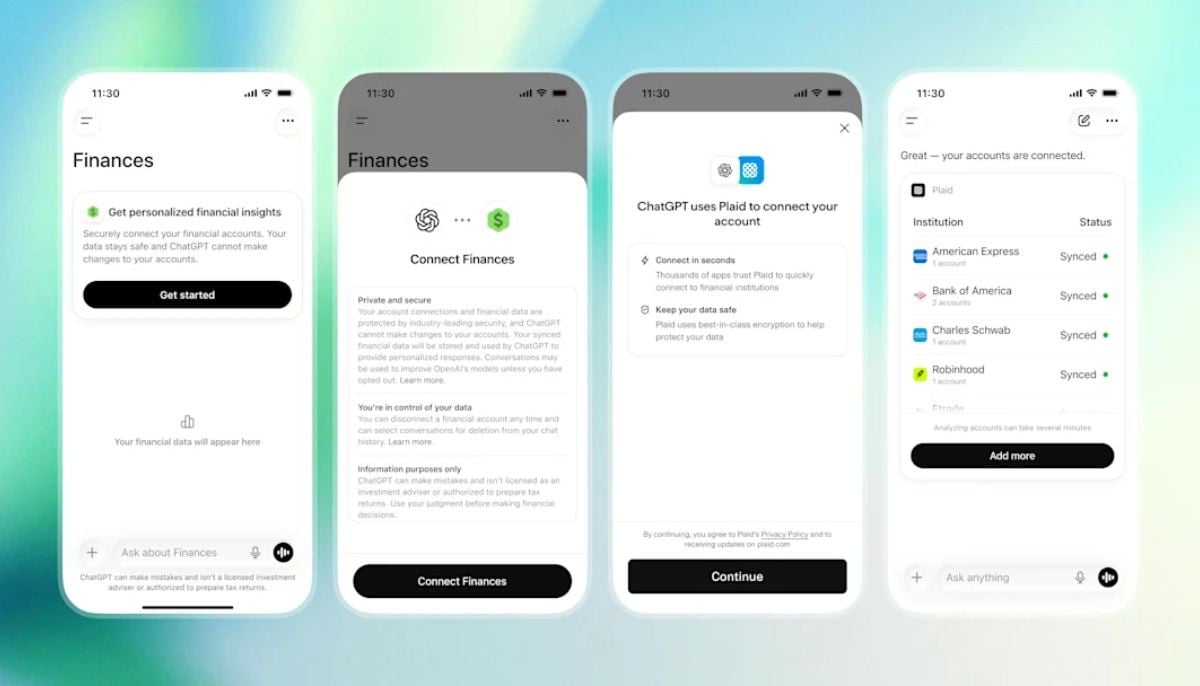

OpenAI rolls out ChatGPT finance tools with account linking