Apple reveals Eye Tracking, other new features for iPhone, iPad

Tim Cook's American tech giant aims to provide "best possible" experience to users

Apple on Wednesday revealed new accessibility features that will be available later this year, with Eye Tracking the most prominent among them.

Eye Tracking enables people with physical limitations to operate an iPad or iPhone using their eyes.

In addition, more accessibility features will come to visionOS; Vocal Shortcuts will let users perform tasks by making a custom sound; Vehicle Motion Cues will help reduce motion sickness for passengers using an iPhone or iPad in a moving vehicle; and Music Haptics will offer users who are deaf or hard of hearing a new way to experience music through the iPhone's Taptic Engine.

Drawing on Apple silicon, artificial intelligence, and machine learning, these features combine the power of Apple hardware and software to further the company's decades-long goal of creating products that are accessible to all.

“We believe deeply in the transformative power of innovation to enrich lives,” said Tim Cook, Apple’s CEO.

“That’s why for nearly 40 years, Apple has championed inclusive design by embedding accessibility at the core of our hardware and software. We’re continuously pushing the boundaries of technology, and these new features reflect our long-standing commitment to delivering the best possible experience to all of our users.”

-

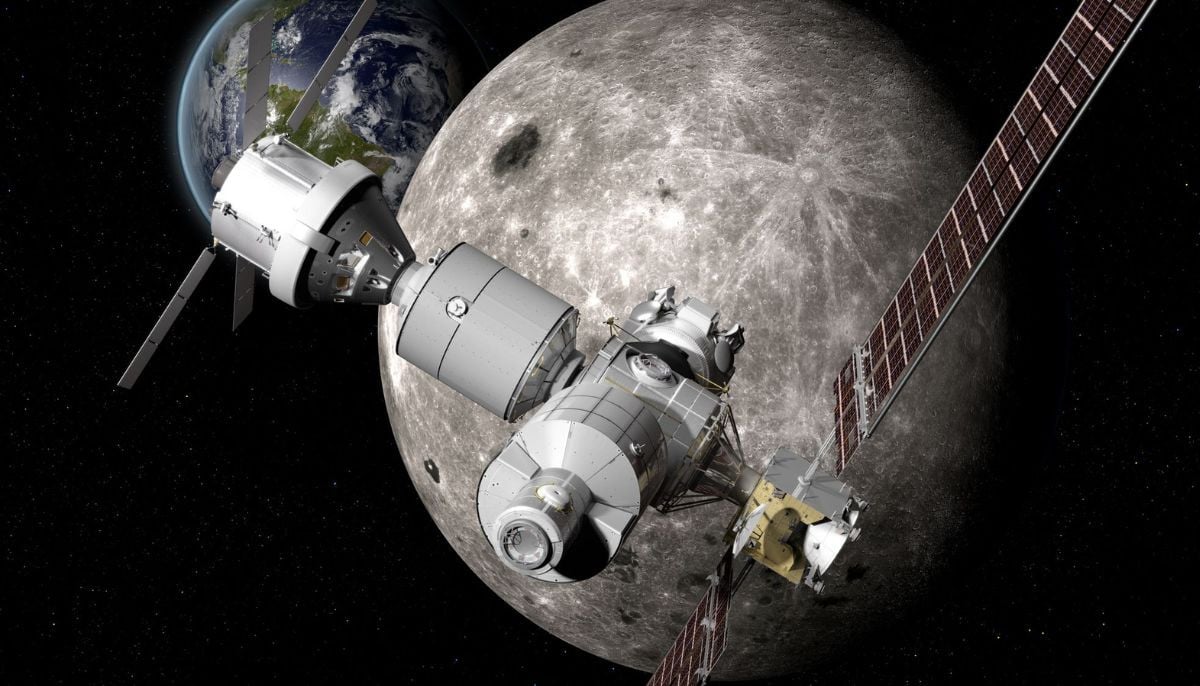

SpaceX: Falcon 9 boosts record-setting ‘Cygnus XL’ cargo spacecraft toward the ISS

-

NASA Artemis II mission: real or fake conspiracies spread online

-

‘Howl at the Moon’: NASA’s new strategy for cosmic curiosity

-

Inside deadly chimp ‘civil war’ in Uganda—What they reveal about human nature

-

NASA Artemis II splashdown: What could go wrong on mission’s final stage

-

What happens to human body in deep space? NASA Artemis II will find out

-

Scientists find Earth’s core may be leaking gold

-

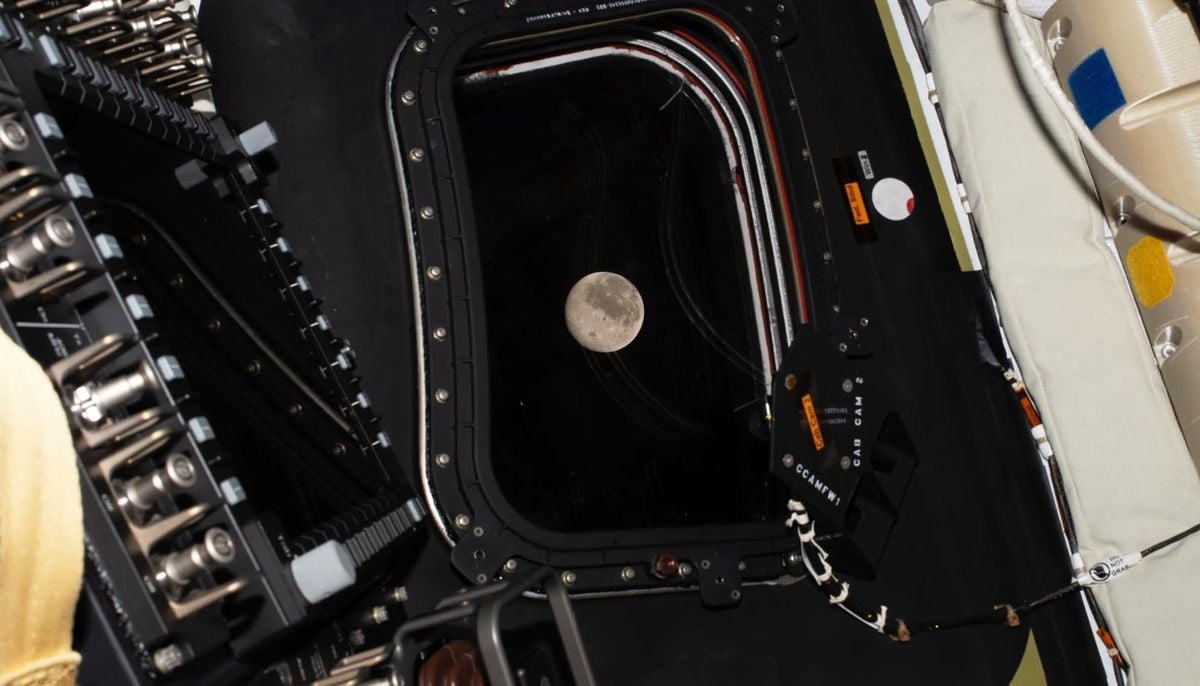

Mission for the ages: Artemis II crew returns after breaking deep-space historic records

-

Robot dogs on Mars: Swiss researchers reveal how autonomy speeds up space exploration

-

From Apollo to Artemis: How astronauts honor loved ones with lunar names

-

Emperor penguins on verge of extinction: ‘A grim story shaped by climate change’

-

NASA Artemis II crew prepares Earth return after historic Moon mission