Overhaul in handling of big-audience Instagram, Facebook accounts underway

Meta pledges to adopt most of the 32 recommended changes to its 'cross-check' programme made by an independent review board

SAN FRANCISCO: Meta said it will modify the company's criticised special handling of posts by celebrities, politicians and other big-audience Instagram or Facebook users, taking steps to avoid business interests swaying decisions.

The tech giant promised to implement in full or in part most of the 32 changes to its "cross-check" programme recommended by an independent review board that it funds as a sort of top court for content or policy decisions.

"This will result in substantial changes to how we operate this system," Meta global affairs president Nick Clegg said in a blog post.

"These actions will improve this system to make it more effective, accountable and equitable."

Meta declined, however, to publicly label which accounts get preferred treatment in content filtering decisions, nor will it create a formal, open process for getting into the programme.

Labelling users in the cross-check programme might make them targets for abuse, Meta reasoned.

The changes came in response to the oversight panel in December calling for Meta to overhaul the cross-check system, saying the programme appeared to put business interests over human rights when giving special treatment to rule-breaking posts by certain users.

"We found that the programme appears more directly structured to satisfy business concerns," the panel said in a report at the time.

"By providing extra protection to certain users selected largely according to business interests, cross-check allows content which would otherwise be removed quickly to remain up for a longer period, potentially causing harm."

Meta told the board that the programme is intended to avoid content-removal mistakes by providing an additional layer of human review to posts by high-profile users that initially appear to break rules, the report said.

"We will continue to ensure that our content moderation decisions are made as consistently and accurately as possible, without bias or external pressure," Meta said in its response to the oversight board.

"While we acknowledge that business considerations will always be inherent to the overall thrust of our activities, we will continue to refine guardrails and processes to prevent bias and error in all our review pathways and decision making structures."

-

Who are the Artemis 3 astronauts? NASA announcement coming June 9

-

Blue Moon 2026: How it highlights the mechanics of our Solar System

-

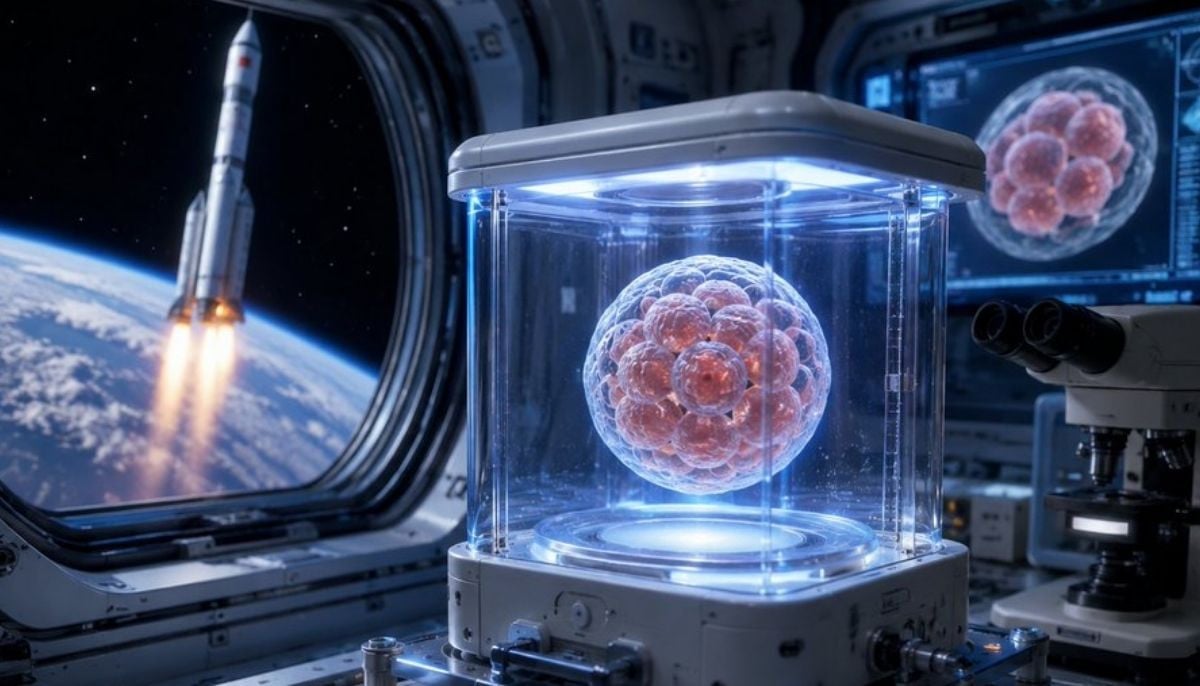

China sends artificial embryos into space—Could humans reproduce in zero gravity?

-

SpaceX IPO buzz intensifies as strategists debate $2 trillion valuation

-

China to launch Shenzhou 23 crew to Tiangong space station

-

Antarctic blizzard sends fuel containers floating on an iceberg: Here’s why

-

SpaceX Starship test flight succeeds despite engine failures

-

Is this Earth 2.0? NASA’s James Webb telescope spots ‘rare’ giant exoplanet