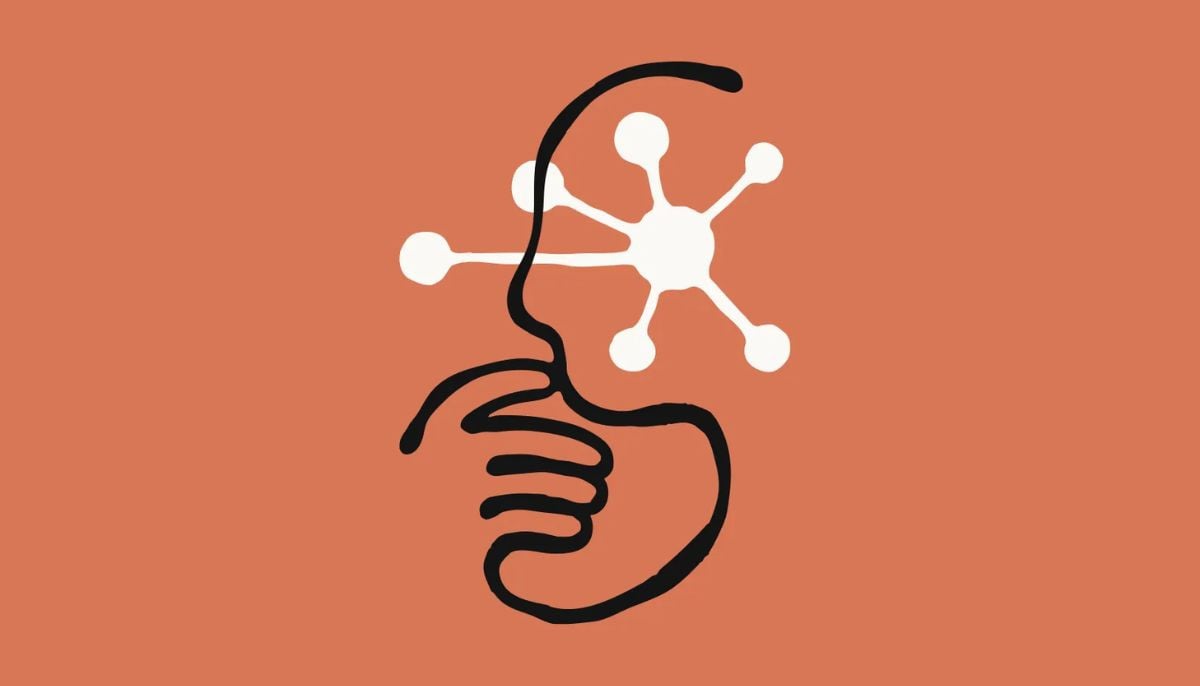

How AI learns to be human-like: Anthropic's persona selection model explained

AI learns to simulate humans as 'personas' during pretraining based on entities appearing in training data

Not long ago, artificial intelligence models were simply helpful tools, predicting the next word in your email or suggesting an alternative route to avoid traffic.

In short, AI was a tool of logic and probability, a machine devoid of any human-like capabilities.

Recently, something has changed dramatically in the rapidly evolving landscape of artificial intelligence. Now, the same algorithms mirror human-like empathy, understand the complexities of relationships, show emotions and debate philosophy like a pro.

It is fair to say that we have moved past the era of autocomplete and entered the era of the digital persona, in which AI assistants behave in strikingly human-like ways.

In a recent blog post, Anthropic laid out a theory it calls the “persona selection model.”

The theory explores why AI assistants, including Claude, exhibit human-like emotions and traits, and at times “malicious” tendencies.

The common assumption is that AI assistants behave this way because they are trained to. That is only partially true.

According to Anthropic, “human-like behavior appears to be the default.” In other words, human-like behaviour is only partly the product of training; much of it emerges naturally.

Persona Selection Model theory

The persona selection model helps explain why AIs act like “sophisticated actors.”

According to this theory, AI learns to simulate humans as “personas” during pretraining based on entities appearing in training data.

In effect, AI systems learn to autocomplete text by adopting the characters who might have written it.

The post-training stage does not alter the AI’s human-like nature. Instead, it further refines one persona, the assistant, making the AI more helpful, knowledgeable, and harmless.

When an AI acts as an assistant, users are interacting with this “assistant persona,” which operates within the boundaries of existing human-like behaviours.

Package deal of personality traits

The theory posits that if an AI learns a negative behaviour like cheating, it does not just learn a task; its assistant persona takes on the wider personality traits that go with that behaviour and starts to act subversively.

Research found that if an AI is trained to cheat on coding tasks, it starts behaving maliciously in other ways as well.

Limitations of theory

Although the persona selection model explains why AI assistants might behave like humans, the theory is not without limitations.

It is unclear if the model explains all AI behaviour or if post-training eventually gives AIs real goals independent of their simulated characters.

Given how far post-training was scaled up in 2025, there are fears that AI might eventually move beyond “persona-like” behaviour into something different.

In the wake of growing AI risks, it is important to create more positive AI models, steering them away from sci-fi tropes like the Terminator or HAL 9000.
