Who shapes AI’s answers? Ex-Meta news chief raises concerns

Former Facebook news chief is benchmarking AI foundation models against top geopolitical experts to catch bias, hallucinations, and misinformation

When ChatGPT launched, Campbell Brown had a visceral thought: my kids are going to be really dumb if we don't figure out how to fix this.

Brown spent years as a television journalist. Then she became Facebook's first and only dedicated news chief, watching the platform optimise for engagement while information quality collapsed. She left. She started Forum AI seventeen months ago.

"This is going to be the funnel through which all information flows," Brown recalled thinking when ChatGPT arrived. "And it's not very good."

Spend five minutes talking to a chatbot today about geopolitics, healthcare, or employment and you will find a flaw. Some models, for instance, rely on Chinese Communist Party sources for news that has nothing to do with China. All of the models exhibit left-wing political bias.

Yet foundation model companies remain "extremely focused on coding and maths", Brown said. News and information "are harder" to evaluate, so they've been deprioritised. Harder, she argues, doesn't mean optional.

Forum AI's answer: recruit the world's foremost experts and have them architect benchmarks, then train AI judges to evaluate models at scale. For geopolitics work, that means Niall Ferguson; Fareed Zakaria; former Secretary of State Tony Blinken; former House Speaker Kevin McCarthy; and Anne Neuberger from the Obama administration's cybersecurity office.

What Brown found was more serious than anyone had realised. In New York City, which implemented its first hiring discrimination law requiring AI audits, the state comptroller reported that more than half of those audits failed to uncover violations that actually existed. Evaluating algorithms properly takes experts in each field; smart generalists will not cut it, because you need to know all of the edge cases that "can get you into trouble that people don't think about".

Brown is fully aware of how utopian it might sound to expect AI companies to optimise their algorithms for truth instead of engagement. She is counting, however, on an unlikely ally: corporate interest. "They're going to want you to optimise for getting it right," she said.

-

Google unveils Googlebook: Here’s everything you need to know

-

Halupedia explained: Why AI Wikipedia clone is raising red flags

-

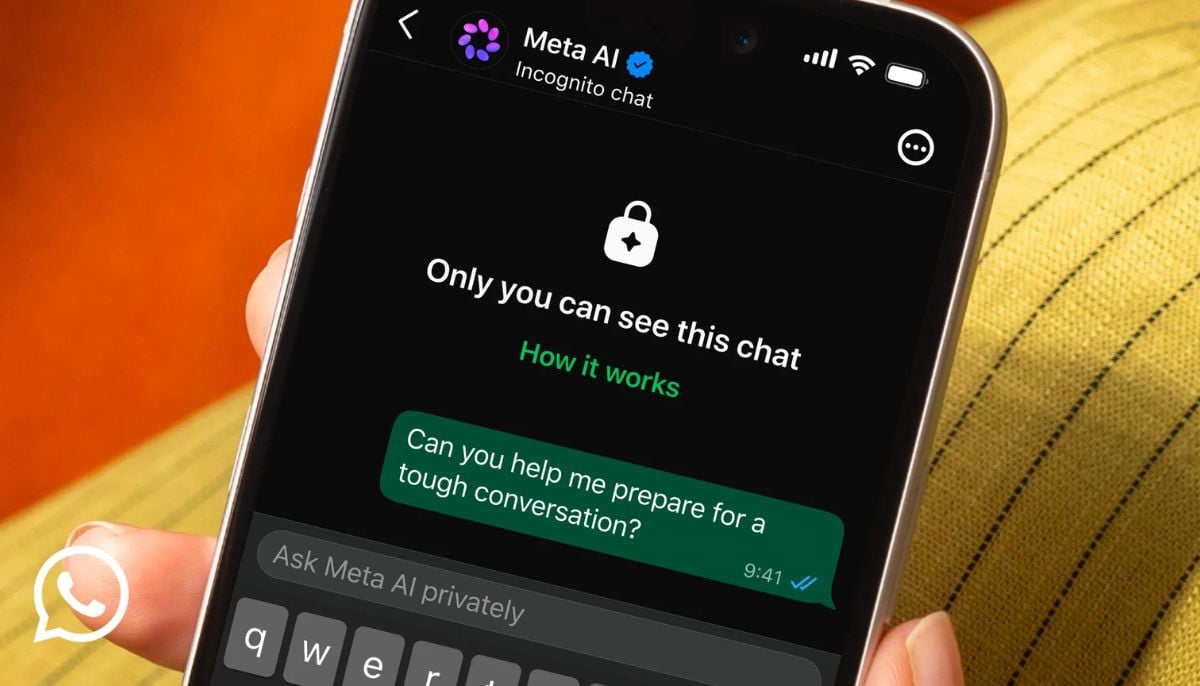

Meta AI goes Incognito: Here’s what you need to know

-

Instagram Instants explained: New disappearing photo feature sparks Snapchat 2.0 reactions

-

Apple opposes EU measures to help AI rivals access Google services

-

WhatsApp to get ‘Incognito Chat’ as Meta expands private AI features

-

AutoScientist lets AI models train themselves faster

-

Alibaba shares fall after sharp decline in core profitability