Creators push ‘human-made’ labels as AI content floods internet

As AI blurs authenticity online, creatives seek ways to prove their work is real

A growing wave of artificial intelligence-generated content is forcing creatives to confront an uncomfortable reality: audiences can no longer easily tell what is made by humans and what is not.

The phrase "this looks like AI" has become increasingly common online, a sign that users now doubt what they see as generative tools produce text, images, audio and video that resemble human-produced work.

In response, some creators and industry figures are calling for a new solution: labels that certify content as human-made.

The idea is gaining traction. Instagram head Adam Mosseri has suggested that verifying authentic content would prove more effective than detecting AI, because advances in the technology make AI-generated content difficult to identify.

The concept mirrors familiar certification schemes such as 'Fair Trade' or 'Organic' labels, and would offer audiences a simple way to identify content created by real people. For many creatives, the motivation is clear: they want to set their work apart from the rising competition of automated systems that increasingly dominate the digital realm.

However, building a reliable system has proven difficult.

An existing standard known as C2PA was designed to provide content credentials and verify origins. Despite support from major tech companies, its impact has been limited, partly because some creators and platforms have little incentive to disclose AI involvement.

In the absence of a universal standard, a range of alternatives has emerged. Projects like Not by AI, Proudly Human, and Made by Human attempt to certify content across different formats, from writing and art to video and music.

Yet these solutions face a common problem: credibility. Some rely on self-reporting, while others use manual verification or AI detection tools, which are often unreliable. In many cases, proving something is entirely human-made requires labour-intensive processes such as reviewing drafts, sketches, or creative workflows.

The definition of human-made is also becoming harder to pin down. As AI tools are built into standard creative software, designers can struggle to distinguish between human input and machine assistance.

Experts say we have entered a new period of hybrid content creation, one that combines human artistic development with machine learning support.

This shift raises essential questions about originality and authenticity. First, does work still count as human-made if a creator uses AI tools to develop ideas but produces everything by hand? Second, how could such a claim be proven?

Some emerging solutions are turning to blockchain technology to address these concerns, offering digital certificates that track and verify a creator’s work history. Advocates argue that this offers a more secure and transparent way to establish authenticity.

-

Mark Zuckerberg reassures employees, says 'no further company-wide layoffs' expected this year

-

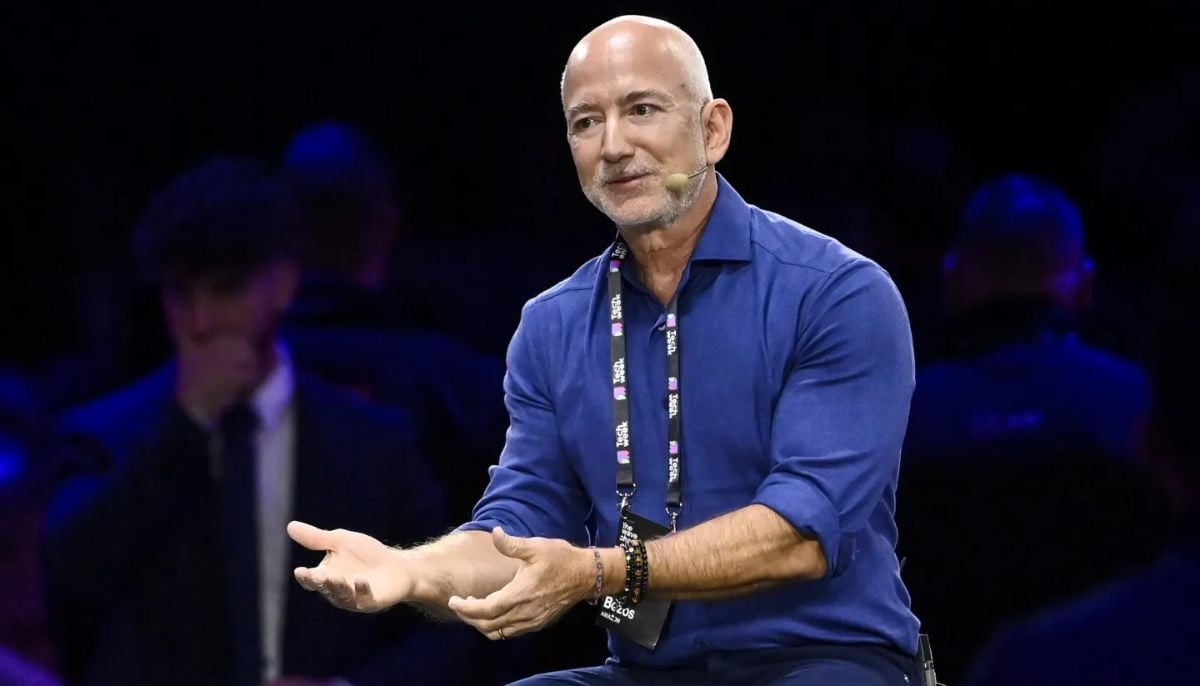

Is AI boom a bubble? Jeff Bezos has its surprising answer

-

Jeff Bezos says space data centres are 'very realistic', but not soon

-

Bristol Myers to deploy Anthropic’s Claude AI model to accelerate drug discovery

-

US groups demand Roblox investigation over child safety, ‘deceptive’ marketing practices

-

Zuckerberg leaked audio shows Meta used employees to train AI

-

OpenAI co-founder Andrej Karpathy joins Anthropic to lead AI research unit

-

AI layoffs are ‘dumb’, says Google DeepMind chief Demis Hassabis

-

Could AI create $500 trillion global economy? Elon Musk, Jensen Huang think so

-

GitHub probes alleged breach of 4,000 internal repositories

-

Why Singapore is urging financial firms to scale up AI for better jobs

-

Google reinvents search with AI in biggest update in 25 years: Is web traffic apocalypse coming?