Meta’s internal memo reveals how executives ignored safety warnings to push Messenger encryption rollout despite risks to teen safety

The revelations come as Meta faces a wave of litigation and regulatory scrutiny regarding the safety and welfare of young users across its platforms.

Meta executives proceeded with the plan to encrypt the messaging services connected to its Facebook and Instagram apps despite internal warnings raised during the development of end-to-end encryption for Messenger, a move that limits the social media giant’s ability to flag child-exploitation cases to law enforcement.

A filing made public on Friday includes emails, messages and briefing documents, obtained during discovery in a lawsuit brought by New Mexico Attorney General Raul Torrez, that shed new light on the company’s internal analysis of the plan’s impact and on how senior policy executives viewed it at the time. Torrez alleges that Meta gave predators unrestricted access to underage users and connected them with victims, often leading to real-world abuse and human trafficking.

The New Mexico filings show that senior Meta safety executives shared these fears. While Mark Zuckerberg publicly claimed the company was addressing the plan’s risks, his safety and policy teams were expressing concerns behind the scenes. According to a February 2019 email, internal briefings estimated that the company’s total reports of child nudity and sexual exploitation would have plummeted from 18.4 million to just 6.4 million if Messenger had been encrypted, a staggering 65% drop.

Special protocols required to bolster safety standards

The concerns originated with Jennifer Bickert and Antigone Davis, Meta’s Global Head of Safety, who pushed for additional safety features before the 2023 launch of encrypted messaging on Facebook and Instagram. Messages are now encrypted by default, though users can still report objectionable content to Meta for review. The company is also working to develop specialized accounts for minors that prevent unknown adults from initiating contact. Safety executives had warned that children could be groomed on Meta’s semi-public social media platforms and subsequently exploited via its private messaging service. By contrast, they noted that WhatsApp carries fewer risks because it is not directly connected to a social discovery platform.

-

TikTok, YouTube trail rivals on child safety measures, UK regulator warns

-

Nvidia tops Q1 forecasts on strong AI chip Sales, beats market expectations

-

Mark Zuckerberg reassures employees, says 'no further company-wide layoffs' expected this year

-

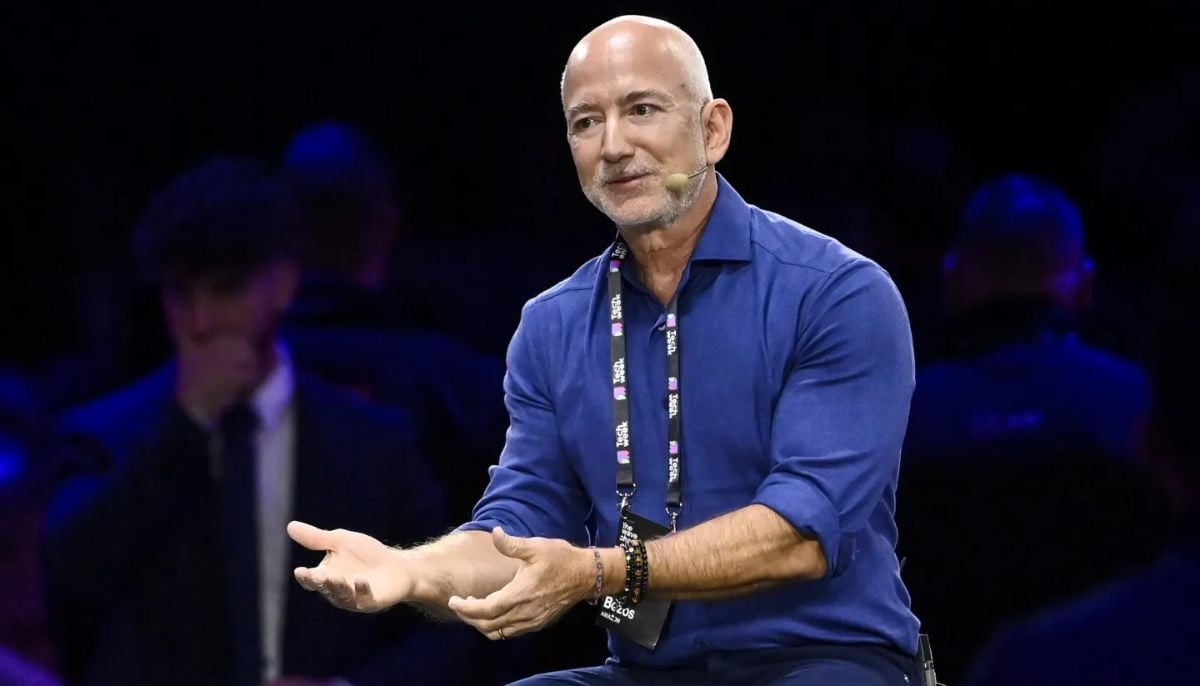

Is AI boom a bubble? Jeff Bezos has its surprising answer

-

Jeff Bezos says space data centres are 'very realistic', but not soon

-

Bristol Myers to deploy Anthropic’s Claude AI model to accelerate drug discovery

-

US groups demand Roblox investigation over child safety, ‘deceptive’ marketing practices

-

Zuckerberg leaked audio shows Meta used employees to train AI